Showcasing LLM Performance: How Research Papers Present Evaluation Results

Building a high-performing LLM is only part of the challenge. Equally important is how its performance is communicated. Leading research papers do not rely on bare claims; they rely on structured benchmarks, transparent methodology, and measurable comparisons.

Here is how strong evaluation reporting is typically presented.

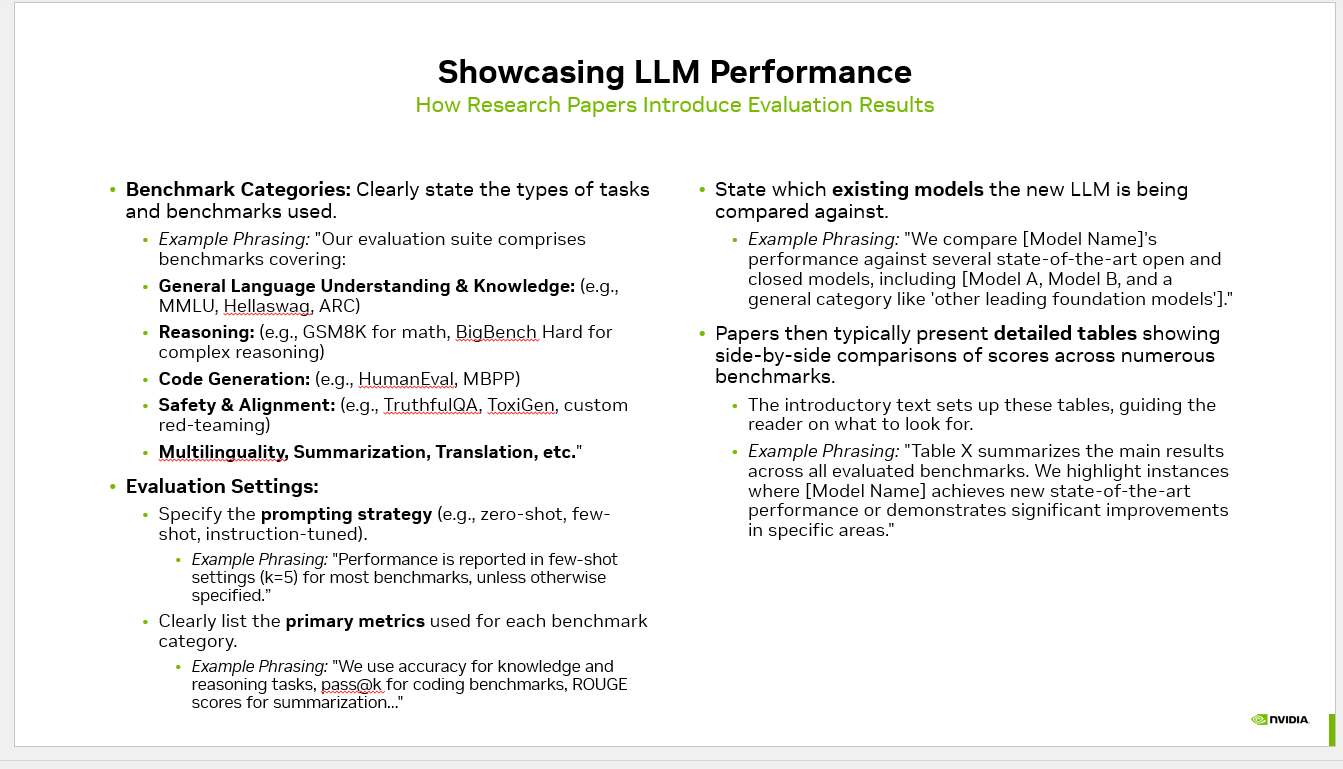

1. Clearly Defined Benchmark Categories

Top-tier research begins by explicitly defining what is being evaluated. Benchmarks are grouped into well-structured categories to ensure clarity and reproducibility.

Common categories include:

General Language Understanding & Knowledge (e.g., MMLU, HellaSwag, ARC)

Reasoning (e.g., GSM8K, BIG-Bench Hard)

Code Generation (e.g., HumanEval, MBPP)

Safety & Alignment (e.g., TruthfulQA, ToxiGen, red-teaming datasets)

Multilinguality, Summarization, Translation, and related tasks

This structured categorization builds credibility and allows others to reproduce results with confidence.
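As a minimal sketch, the categories above can be declared once as data that an evaluation script iterates over. That way the grouping in the paper and the grouping in the code cannot drift apart. The `BENCHMARK_SUITE` layout and the helper names are hypothetical; only the benchmark names come from the list above.

```python
from collections.abc import Iterator

# Hypothetical suite definition: each category maps to the benchmarks run for it.
# The benchmark names mirror the examples in the text; the structure is an assumption.
BENCHMARK_SUITE: dict[str, list[str]] = {
    "General Language Understanding & Knowledge": ["MMLU", "HellaSwag", "ARC"],
    "Reasoning": ["GSM8K", "BIG-Bench Hard"],
    "Code Generation": ["HumanEval", "MBPP"],
    "Safety & Alignment": ["TruthfulQA", "ToxiGen"],
}


def validate_suite(suite: dict[str, list[str]]) -> None:
    """Reject a suite in which one benchmark is filed under two categories."""
    seen: dict[str, str] = {}
    for category, benchmarks in suite.items():
        for benchmark in benchmarks:
            if benchmark in seen:
                raise ValueError(
                    f"{benchmark!r} appears in both {seen[benchmark]!r} and {category!r}"
                )
            seen[benchmark] = category


def iter_benchmarks(suite: dict[str, list[str]]) -> Iterator[tuple[str, str]]:
    """Yield (category, benchmark) pairs in the declared order."""
    for category, benchmarks in suite.items():
        for benchmark in benchmarks:
            yield category, benchmark


def category_of(benchmark: str, suite: dict[str, list[str]]) -> str:
    """Return the category a benchmark was declared under."""
    for category, name in iter_benchmarks(suite):
        if name == benchmark:
            return category
    raise KeyError(f"{benchmark!r} is not in the declared suite")


if __name__ == "__main__":
    validate_suite(BENCHMARK_SUITE)
    for category, benchmark in iter_benchmarks(BENCHMARK_SUITE):
        print(f"{category}: {benchmark}")
```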

2. Transparent Evaluation Settings

Performance metrics without context are meaningless. Strong research papers clearly document the evaluation setup.

Figure: an evaluation gate in CI. A pull request first runs unit tests. An eval harness (PromptFoo or Braintrust) then scores a golden set of 200 tagged cases, using an LLM judge plus regex graders. The resulting aggregate and per-slice scores decide the outcome: a regression of more than 2 percent blocks the merge; otherwise the PR merges to main.
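The last step of the pipeline above (block the merge if the score regresses by more than 2 percent) can be sketched in plain Python. The sketch rests on three assumptions:

- The eval harness has already produced per-slice scores in [0, 1].
- "2 percent" means a relative drop against the main-branch baseline.
- The gate checks each slice as well as the aggregate.

`regression_gate` and the slice names are hypothetical and are not part of the PromptFoo or Braintrust APIs.

```python
from dataclasses import dataclass, field


@dataclass
class GateResult:
    blocked: bool
    reasons: list[str] = field(default_factory=list)


def aggregate(scores: dict[str, float], case_counts: dict[str, int]) -> float:
    """Case-weighted mean over every slice in case_counts; a missing slice counts as 0."""
    total = sum(case_counts.values())
    return sum(scores.get(name, 0.0) * n for name, n in case_counts.items()) / total


def regression_gate(
    baseline: dict[str, float],
    candidate: dict[str, float],
    case_counts: dict[str, int],
    max_relative_drop: float = 0.02,
) -> GateResult:
    """Block if the aggregate or any slice drops more than max_relative_drop vs. baseline."""
    reasons: list[str] = []
    floor = 1.0 - max_relative_drop

    base_total = aggregate(baseline, case_counts)
    cand_total = aggregate(candidate, case_counts)
    if cand_total < base_total * floor:
        reasons.append(f"aggregate: {base_total:.3f} -> {cand_total:.3f}")

    for name, base_score in baseline.items():
        # A slice missing from the candidate run is treated as scoring 0.
        cand_score = candidate.get(name, 0.0)
        if cand_score < base_score * floor:
            reasons.append(f"slice {name!r}: {base_score:.3f} -> {cand_score:.3f}")

    return GateResult(blocked=bool(reasons), reasons=reasons)


if __name__ == "__main__":
    case_counts = {"math": 120, "code": 80}  # hypothetical 200-case golden set
    baseline = {"math": 0.90, "code": 0.80}  # placeholder scores, not real results
    candidate = {"math": 0.90, "code": 0.70}
    result = regression_gate(baseline, candidate, case_counts)
    print("Block merge" if result.blocked else "Merge to main", result.reasons)
```

Checking slices as well as the aggregate is a deliberate choice: a gain on one slice can otherwise hide a regression on another.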

Well-documented papers specify:

Prompting strategy (zero-shot or few-shot) and model variant (base or instruction-tuned)

Number of in-context examples (e.g., 5-shot for MMLU)

Primary metrics reported for each task category
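One way to keep these settings attached to every number is to record them in a small immutable object and build prompts from it. The `EvalSettings` fields and the `Question:`/`Answer:` template below are illustrative assumptions. They are not a format prescribed by any particular paper or evaluation harness.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalSettings:
    """Settings reported next to a score; the field names are illustrative."""

    benchmark: str
    num_shots: int  # 0 means zero-shot
    metric: str
    model_variant: str = "base"  # e.g. "base" or "instruction-tuned"

    @property
    def label(self) -> str:
        return f"{self.benchmark} ({self.num_shots}-shot, {self.metric}, {self.model_variant})"


def build_prompt(settings: EvalSettings, exemplars: list[tuple[str, str]], question: str) -> str:
    """Prepend exactly num_shots solved exemplars to the test question (hypothetical template)."""
    if settings.num_shots > len(exemplars):
        raise ValueError(f"need {settings.num_shots} exemplars, got {len(exemplars)}")
    shots = [f"Question: {q}\nAnswer: {a}" for q, a in exemplars[: settings.num_shots]]
    return "\n\n".join(shots + [f"Question: {question}\nAnswer:"])


if __name__ == "__main__":
    settings = EvalSettings(benchmark="MMLU", num_shots=2, metric="accuracy")
    exemplars = [("What is 1 + 1?", "2"), ("What is 3 + 4?", "7")]
    print(settings.label)
    print(build_prompt(settings, exemplars, "What is 2 + 2?"))
```

Because the dataclass is frozen, it is hashable and can key a results dictionary. A score is then never stored without the settings that produced it.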

Commonly used metrics include:

Accuracy (knowledge and reasoning tasks)

Pass@k (coding benchmarks)

ROUGE (summarization) and BLEU (translation)
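Pass@k is usually not measured by drawing exactly k samples, because that gives a high-variance estimate. A widely used alternative is the unbiased estimator described in the paper that introduced HumanEval. It works in three steps:

- Draw n >= k samples per problem.
- Count the c samples that pass the unit tests.
- Compute 1 - C(n - c, k) / C(n, k) and average it over problems.

The sketch below implements that formula with Python's `math.comb`; the function names are our own.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples drawn, c of them correct."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Every size-k subset of the samples contains at least one correct one.
        return 1.0
    # Probability that a random size-k subset contains no correct sample, complemented.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over problems, given (n, c) per problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # Placeholder counts for three problems, 20 samples each: not real results.
    per_problem = [(20, 0), (20, 5), (20, 20)]
    for k in (1, 5, 10):
        print(f"pass@{k} = {mean_pass_at_k(per_problem, k):.3f}")
```

For k = 1 the estimator reduces to c / n, the fraction of samples that pass.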

This level of transparency prevents misleading comparisons and ensures fairness across models.

3. Rigorous Comparison Against Existing Models

No model exists in isolation. Research papers position new LLMs against:

Leading open-source foundation models

Commercial closed-source systems

Previous internal model versions

Results are presented in detailed, side-by-side tables that enable objective comparison.
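A side-by-side table can be generated directly from one results dictionary. This makes it harder to report a model on a different benchmark set than its baselines. Notes on the sketch:

- The model names and scores are placeholders, not real results.
- The output is a Markdown table, with the best score in each column in bold.
- `lower_is_better` covers metrics where smaller is better, such as toxicity rates.

```python
def comparison_table(
    results: dict[str, dict[str, float]],
    benchmarks: list[str],
    lower_is_better: frozenset[str] = frozenset(),
) -> str:
    """Render a Markdown table: one row per model, best score per benchmark in bold."""
    best: dict[str, float] = {}
    for bench in benchmarks:
        scores = [s[bench] for s in results.values() if bench in s]
        if scores:
            best[bench] = min(scores) if bench in lower_is_better else max(scores)

    lines = [
        "| Model | " + " | ".join(benchmarks) + " |",
        "|---" * (len(benchmarks) + 1) + "|",
    ]
    for model, model_scores in results.items():
        cells = []
        for bench in benchmarks:
            if bench not in model_scores:
                cells.append("n/a")  # not evaluated: shown explicitly, never silently dropped
            elif model_scores[bench] == best[bench]:
                cells.append(f"**{model_scores[bench]:.1f}**")
            else:
                cells.append(f"{model_scores[bench]:.1f}")
        lines.append(f"| {model} | " + " | ".join(cells) + " |")
    return "\n".join(lines)


if __name__ == "__main__":
    # Placeholder numbers for hypothetical models, not real benchmark results.
    results = {
        "our-model": {"MMLU": 70.0, "GSM8K": 55.0, "HumanEval": 31.1},
        "open-baseline": {"MMLU": 68.5, "GSM8K": 61.2},
        "previous-version": {"MMLU": 64.0, "GSM8K": 48.3, "HumanEval": 25.6},
    }
    print(comparison_table(results, ["MMLU", "GSM8K", "HumanEval"]))
```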

Strong reporting also highlights:

Areas achieving state-of-the-art performance

Domains showing significant improvement

Known limitations and trade-offs
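Whether an improvement over a previous internal version is "significant" can be checked statistically rather than by eyeballing the delta. One option, assumed here rather than taken from any specific paper, is an exact McNemar test on per-item correctness. It fits this case because both versions are scored on the same benchmark items. The function names and the 0.05 threshold are illustrative.

```python
from math import comb


def mcnemar_exact_p(old_correct: list[bool], new_correct: list[bool]) -> float:
    """Two-sided exact McNemar p-value from per-item correctness of two model versions."""
    if len(old_correct) != len(new_correct):
        raise ValueError("both versions must be scored on the same items")
    # Only items where the versions disagree carry information about which is better.
    regressions = sum(1 for old, new in zip(old_correct, new_correct) if old and not new)
    fixes = sum(1 for old, new in zip(old_correct, new_correct) if new and not old)
    discordant = regressions + fixes
    if discordant == 0:
        return 1.0
    # Under the null hypothesis the discordant items split as Binomial(discordant, 0.5).
    tail = sum(comb(discordant, i) for i in range(min(regressions, fixes) + 1))
    return min(1.0, 2 * tail / 2**discordant)


def classify_change(
    old_correct: list[bool], new_correct: list[bool], alpha: float = 0.05
) -> str:
    """Label the change between two versions on the same benchmark items."""
    if mcnemar_exact_p(old_correct, new_correct) >= alpha:
        return "no significant change"
    old_acc = sum(old_correct) / len(old_correct)
    new_acc = sum(new_correct) / len(new_correct)
    return "significant improvement" if new_acc > old_acc else "significant regression"


if __name__ == "__main__":
    # Placeholder per-item results on a hypothetical 100-item benchmark.
    previous = [True] * 50 + [False] * 50
    current = [True] * 70 + [False] * 30
    print(classify_change(previous, current))
```

The test only looks at items where the two versions disagree. A one-point accuracy gain on a 100-item benchmark therefore cannot clear the threshold: it yields a p-value of 1.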

This balanced presentation strengthens trust and technical credibility.

Why This Matters

Structured benchmarking, standardized metrics, and transparent comparisons transform evaluation from opinion into engineering.

For teams building AI products, the takeaway is clear:

Define benchmark categories upfront

Standardize evaluation settings

Track consistent, task-appropriate metrics

Compare against strong and relevant baselines

Moving from “It looks good” to “It is measurably better” is what separates experimentation from production-grade AI.
