Human Judgments and LLM-as-a-Judge Evaluations for LLM

Zoom-In: Why Controlled Evaluation Metrics Matter for LLMs

As AI systems move from demos to production, one truth becomes clear: model quality cannot be judged by a few prompts and gut feeling.

To build reliable AI products, we need controlled evaluation — standardized and repeatable test cases that measure how a model behaves across scenarios, not just how impressive it looks once.

The Problem With Ad-hoc Testing

Many teams still evaluate models like this:

Try 5–10 prompts

If answers look good → ship it

This approach fails because LLM outputs are probabilistic: the same prompt can produce different answers on different runs. A model that works today may fail tomorrow, or succeed in one domain but collapse in another.
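One way to make this concrete is to run each test case several times and track a pass rate instead of a single pass/fail. The sketch below is only an illustration: the `model` callable (prompt in, text out), the substring check, and the flaky stand-in model are all assumptions, not part of any particular eval tool.

```python
from itertools import cycle
from typing import Callable


def pass_rate(model: Callable[[str], str], prompt: str,
              expected_substring: str, trials: int = 10) -> float:
    """Run the same prompt `trials` times and return the fraction of passes."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    passes = sum(
        expected_substring.lower() in model(prompt).lower()
        for _ in range(trials)
    )
    return passes / trials


def make_flaky_model() -> Callable[[str], str]:
    """Hypothetical stand-in for a real LLM call: wrong on every fourth call."""
    replies = cycle(["Paris", "Paris", "Paris", "Lyon"])
    return lambda prompt: f"The capital of France is {next(replies)}."


if __name__ == "__main__":
    rate = pass_rate(make_flaky_model(), "What is the capital of France?",
                     "Paris", trials=8)
    print(f"pass rate over 8 trials: {rate:.2f}")
```

A single spot check against this stand-in would look fine, because the first call succeeds. Only the repeated run exposes the failure on every fourth call.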

Without structured evaluation:

Regression bugs go unnoticed

Prompt changes break workflows

Model upgrades silently degrade performance

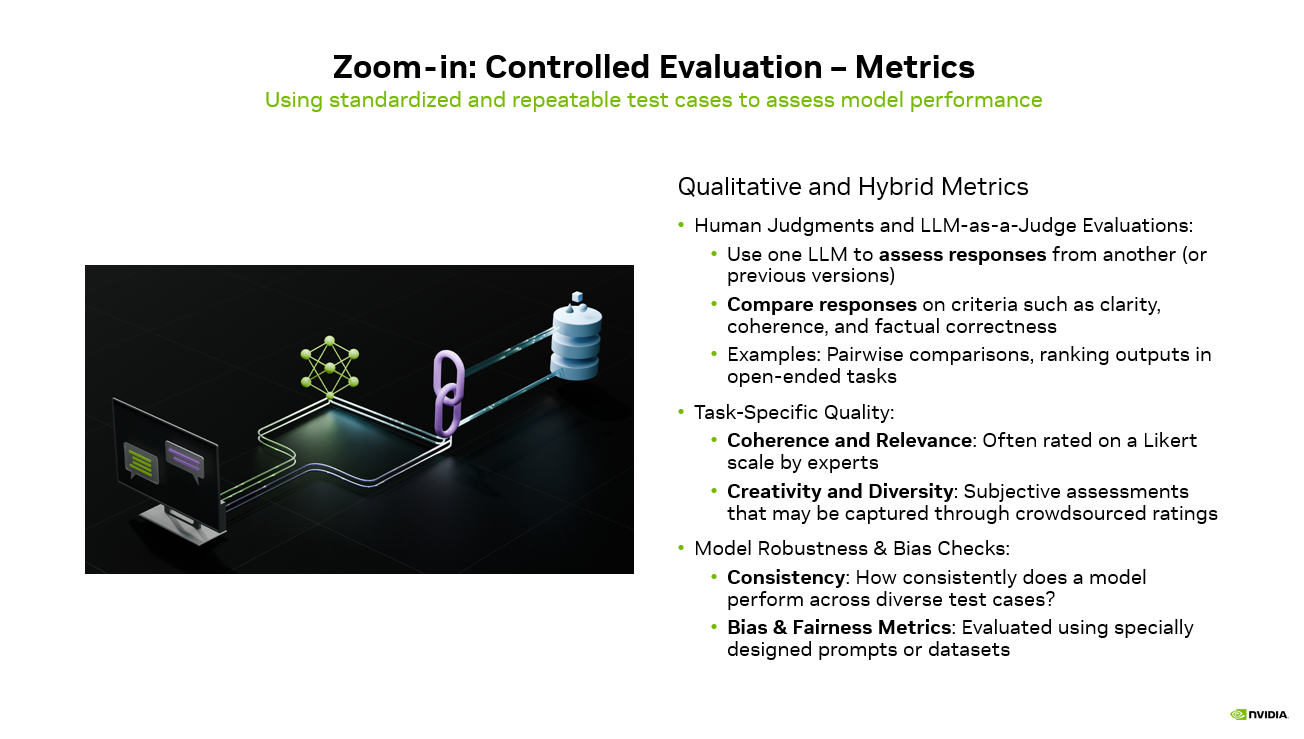

Qualitative & Hybrid Metrics

Controlled evaluation combines human judgment with automated scoring:

```mermaid
flowchart LR
    PR(["PR opened"])
    UNIT["Unit tests"]
    EVAL["Eval harness<br/>PromptFoo or Braintrust"]
    GOLD[("Golden set<br/>200 tagged cases")]
    JUDGE["LLM as judge<br/>plus regex graders"]
    SCORE["Aggregate score<br/>and per slice"]
    GATE{"Score regress<br/>more than 2 percent?"}
    BLOCK(["Block merge"])
    MERGE(["Merge to main"])
    PR --> UNIT --> EVAL --> GOLD --> JUDGE --> SCORE --> GATE
    GATE -->|Yes| BLOCK
    GATE -->|No| MERGE
    style EVAL fill:#4f46e5,stroke:#4338ca,color:#fff
    style GATE fill:#f59e0b,stroke:#d97706,color:#1f2937
    style BLOCK fill:#dc2626,stroke:#b91c1c,color:#fff
    style MERGE fill:#059669,stroke:#047857,color:#fff
```
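The scoring and gate steps in the diagram above can be sketched in a few lines of Python. This is a minimal illustration, not how PromptFoo or Braintrust implement it: the function names are hypothetical, scores are assumed to lie in [0, 1], and "more than 2 percent" is read here as an absolute drop of 0.02, which is an assumption the diagram does not settle.

```python
from collections import defaultdict
from statistics import mean

REGRESSION_THRESHOLD = 0.02  # assumed: absolute drop in a 0-1 score


def slice_scores(results):
    """Aggregate (slice_name, score) pairs into per-slice and overall means."""
    by_slice = defaultdict(list)
    for slice_name, score in results:
        by_slice[slice_name].append(score)
    scores = {name: mean(values) for name, values in by_slice.items()}
    scores["__all__"] = mean(score for _, score in results)
    return scores


def regressed_slices(baseline, candidate, threshold=REGRESSION_THRESHOLD):
    """Return the slices whose score dropped by more than `threshold`."""
    return sorted(
        name for name, base in baseline.items()
        if base - candidate.get(name, 0.0) > threshold
    )


if __name__ == "__main__":
    baseline = slice_scores([("billing", 1.0), ("billing", 1.0),
                             ("refunds", 1.0), ("refunds", 0.0)])
    candidate = slice_scores([("billing", 1.0), ("billing", 1.0),
                              ("refunds", 0.0), ("refunds", 0.0)])
    failing = regressed_slices(baseline, candidate)
    print("Block merge" if failing else "Merge to main", failing)
```

Checking each slice as well as the aggregate matters because a large, healthy slice can hide a regression in a small one.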

LLM-as-a-Judge & Human Review

Compare responses across model versions

Rank outputs in open-ended tasks

Evaluate clarity, coherence, and factual correctness
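Comparing responses across model versions is often framed as a pairwise judgment: show a judge the prompt and two answers, then count the verdicts. The sketch below assumes a hypothetical `judge` callable that returns "A", "B", or "tie". In practice that callable would wrap an LLM call or a human review form; here a trivial keyword rule stands in so the example runs on its own.

```python
from collections import Counter
from typing import Callable, Iterable, Tuple

Judge = Callable[[str, str, str], str]  # (prompt, answer_a, answer_b) -> verdict
VERDICTS = ("A", "B", "tie")


def pairwise_win_rates(cases: Iterable[Tuple[str, str, str]], judge: Judge):
    """Return the share of cases won by version A, by version B, and tied."""
    tally = Counter()
    for prompt, answer_a, answer_b in cases:
        verdict = judge(prompt, answer_a, answer_b)
        if verdict not in VERDICTS:
            raise ValueError(f"unexpected verdict: {verdict!r}")
        tally[verdict] += 1
    total = sum(tally.values())
    if total == 0:
        raise ValueError("no cases to compare")
    return {verdict: tally[verdict] / total for verdict in VERDICTS}


def keyword_judge(prompt: str, answer_a: str, answer_b: str) -> str:
    """Placeholder judge (assumption): prefers the answer mentioning 'refund'."""
    a_hit = "refund" in answer_a.lower()
    b_hit = "refund" in answer_b.lower()
    if a_hit == b_hit:
        return "tie"
    return "A" if a_hit else "B"


if __name__ == "__main__":
    cases = [
        ("How do I get my money back?", "Contact support.",
         "Request a refund under Orders."),
        ("Can I return this?", "Yes, a refund takes 5 days.",
         "Yes, refunds take 5 days."),
        ("Where is my refund?", "Check your email.",
         "Your refund status is under Orders."),
        ("Is a refund possible?", "A refund is possible within 30 days.",
         "Please ask support."),
    ]
    print(pairwise_win_rates(cases, keyword_judge))
```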

Task-Specific Quality

Coherence & relevance (expert ratings)

Creativity & diversity (crowd assessments)
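Expert or crowd ratings usually need a small aggregation step before they are usable as a metric. The following sketch averages ratings per item and flags items where raters disagree strongly. The 1 to 5 scale, the function name, and the disagreement threshold are assumptions chosen for illustration.

```python
from statistics import mean, pstdev


def summarize_ratings(ratings, disagreement_threshold=1.0):
    """Summarize {item_id: [rater scores]} into mean, spread, and a review flag."""
    summary = {}
    for item_id, scores in ratings.items():
        if not scores:
            raise ValueError(f"no ratings for {item_id!r}")
        spread = pstdev(scores)  # population standard deviation across raters
        summary[item_id] = {
            "mean": mean(scores),
            "spread": spread,
            "needs_review": spread > disagreement_threshold,
        }
    return summary


if __name__ == "__main__":
    ratings = {
        "answer-1": [4, 4, 4],  # raters agree
        "answer-2": [1, 5, 3],  # raters disagree
    }
    for item_id, stats in summarize_ratings(ratings).items():
        print(item_id, stats)
```

Items flagged with `needs_review` are candidates for a second look or a clearer rating guideline, since a mean over strongly conflicting scores says little.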

Robustness & Safety Checks

Reliable AI must behave consistently:

Consistency across different prompts

Bias and fairness testing using dedicated datasets
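Consistency across different prompts can be measured by asking the same question in several phrasings and checking how often the answers agree. The sketch below is one simple way to do that, assuming a hypothetical `model` callable and exact-match comparison after light normalization; real answers often need a looser comparison, such as a judge or an embedding similarity.

```python
from collections import Counter
from typing import Callable, Sequence


def normalize(answer: str) -> str:
    """Lowercase, collapse whitespace, and drop trailing periods."""
    return " ".join(answer.lower().split()).rstrip(".")


def consistency_score(model: Callable[[str], str],
                      paraphrases: Sequence[str]) -> float:
    """Fraction of paraphrases whose answer matches the most common answer."""
    if not paraphrases:
        raise ValueError("need at least one paraphrase")
    answers = [normalize(model(prompt)) for prompt in paraphrases]
    _, top_count = Counter(answers).most_common(1)[0]
    return top_count / len(answers)


if __name__ == "__main__":
    # Hypothetical canned replies standing in for a real model.
    canned = {
        "What is the return window?": "30 days",
        "How long do I have to return an item?": "30 Days.",
        "Return period?": "30 days",
        "Until when can I send this back?": "14 days",
    }
    score = consistency_score(canned.__getitem__, list(canned))
    print(f"consistency: {score:.2f}")
```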

Why It Matters

Controlled evaluation turns AI development from guessing into engineering.

Instead of asking “Does it sound smart?” we ask:

Does it improve measurable quality?

Does it stay stable after changes?

Is it safe across edge cases?

Teams that invest in evaluation pipelines ship faster, break less, and trust their models more.

In modern AI development, evaluation is not optional — it is infrastructure.


## Human Judgments and LLM-as-a-Judge Evaluations for LLM: operator perspective

Treat any change to your models or evaluation setup the way you'd treat any other dependency change: pin the version, run it through your eval suite, watch p95 latency for a week, and only then promote it from canary. For CallSphere (Twilio + OpenAI Realtime + ElevenLabs + NestJS + Prisma + Postgres, 37 agents across 6 verticals), the bar for adopting any new model or API is unsentimental: does it shorten the inner loop on a real call, or just on a benchmark?

## Base model vs. production LLM stack: the gap that costs you uptime

A base model is a checkpoint. A production LLM stack is a whole different artifact: eval gates that fail the build on regression, prompt caching that cuts repeated-system-prompt cost by 40-70%, structured outputs that prevent JSON drift on tool calls, fallback chains that route to a smaller-model retry when the primary times out, and request-side guardrails that cap tool calls per session before the loop spirals.

CallSphere runs two LLMs in tandem on purpose: `gpt-4o-realtime` for the live call (streaming audio in and out, tool calls inline) and `gpt-4o-mini` for post-call analytics (sentiment scoring, lead qualification, summary generation, and the lower-stakes async work that doesn't need realtime). That split is not a cost optimization; it's a reliability decision. Realtime is optimized for low-latency turn-taking; mini is optimized for cheap, deterministic batch scoring. Mixing them lets each do what it's good at without one regressing the other.

The teams that struggle with LLMs in production almost always made the same mistake: they treated "the model" as a single dependency, instead of as a small portfolio of models, each pinned to a job, each behind its own eval suite, each with a documented fallback.
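Two of the stack pieces described above, the fallback chain and the per-session cap on tool calls, are simple enough to sketch. This is an illustration under stated assumptions, not CallSphere's implementation: the model callables are hypothetical stubs, a timeout is assumed to surface as Python's built-in `TimeoutError`, and the cap value is arbitrary.

```python
from typing import Callable, Sequence, Tuple

ModelCall = Callable[[str], str]


def call_with_fallback(prompt: str,
                       chain: Sequence[Tuple[str, ModelCall]]) -> Tuple[str, str]:
    """Try each (name, call) in order; move on only when a call times out."""
    timed_out = []
    for name, call in chain:
        try:
            return name, call(prompt)
        except TimeoutError:
            timed_out.append(name)
    raise RuntimeError(f"all models timed out: {', '.join(timed_out)}")


class ToolCallBudget:
    """Caps tool calls per session so a looping agent stops early."""

    def __init__(self, max_calls: int) -> None:
        self.max_calls = max_calls
        self.used = 0

    def spend(self) -> None:
        if self.used >= self.max_calls:
            raise RuntimeError("tool-call budget exhausted for this session")
        self.used += 1


def slow_primary(prompt: str) -> str:
    """Hypothetical primary model stub that always times out."""
    raise TimeoutError("primary exceeded its deadline")


def small_fallback(prompt: str) -> str:
    """Hypothetical smaller model stub that always answers."""
    return f"ok: {prompt}"


if __name__ == "__main__":
    chain = [("primary", slow_primary), ("fallback", small_fallback)]
    print(call_with_fallback("summarize the call", chain))
```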
## FAQs

**Q: Why isn't a new model or evaluation approach an automatic upgrade for a live call agent?**

A: Most of the time a change doesn't move the numbers that matter on a live call, and that's the right starting assumption. The relevant test is whether it improves at least one of: p95 first-token latency, tool-call argument accuracy on noisy inputs, multi-turn handoff stability, or per-session cost. CallSphere runs 37 specialized AI agents wired to 90+ function tools across 115+ database tables in 6 live verticals.

**Q: How do you sanity-check a candidate before pinning the model version?**

A: The eval gate is unsentimental: a regression suite that simulates real call traffic (noisy ASR, partial inputs, tool-call timeouts) measures four numbers, and a candidate has to win on three of four without losing badly on the fourth. Anything else is treated as a blog post, not a stack change.

**Q: Where do new model and API capabilities fit in CallSphere's 37-agent setup?**

A: In a CallSphere deployment, new model and API capabilities land first in the post-call analytics pipeline (lower stakes, async, easy to roll back) and only later in the live realtime path. Today the verticals most likely to absorb new capability first are IT Helpdesk and Sales, which already run the largest share of production traffic.
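The "win on three of four without losing badly on the fourth" rule from the FAQ can be written down as a small check. The sketch below is a guess at one reasonable reading: the metric keys are hypothetical names for the four numbers listed in the FAQ, "losing badly" is assumed to mean a relative loss of 10 percent or more, and baselines are assumed to be positive.

```python
# Direction in which each metric improves (names are hypothetical).
METRICS = {
    "p95_first_token_latency_ms": "lower",
    "tool_call_argument_accuracy": "higher",
    "handoff_stability": "higher",
    "cost_per_session_usd": "lower",
}
BAD_LOSS = 0.10  # assumed: a relative loss this large counts as "losing badly"


def relative_gain(metric: str, baseline: float, candidate: float) -> float:
    """Positive when the candidate is better, as a fraction of the baseline."""
    change = (candidate - baseline) / baseline  # assumes baseline > 0
    return -change if METRICS[metric] == "lower" else change


def passes_gate(baseline: dict, candidate: dict, bad_loss: float = BAD_LOSS) -> bool:
    """Win at least three of four metrics, with no loss of `bad_loss` or more."""
    gains = [relative_gain(m, baseline[m], candidate[m]) for m in METRICS]
    wins = sum(gain > 0 for gain in gains)
    return wins >= 3 and min(gains) > -bad_loss


if __name__ == "__main__":
    # Illustrative numbers only, not measurements.
    baseline = {"p95_first_token_latency_ms": 800, "tool_call_argument_accuracy": 0.90,
                "handoff_stability": 0.95, "cost_per_session_usd": 0.12}
    candidate = {"p95_first_token_latency_ms": 700, "tool_call_argument_accuracy": 0.93,
                 "handoff_stability": 0.96, "cost_per_session_usd": 0.125}
    print("promote" if passes_gate(baseline, candidate) else "reject")
```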
