Why We Need to Introduce New Knowledge in AI Systems

Artificial Intelligence systems, especially large language models (LLMs), have transformed how humans interact with technology. However, despite their impressive capabilities, they are not perfect. One of their biggest limitations is the gap between what they know and what they need to know in real-world applications. This gap makes it essential to continuously introduce new knowledge into AI systems.

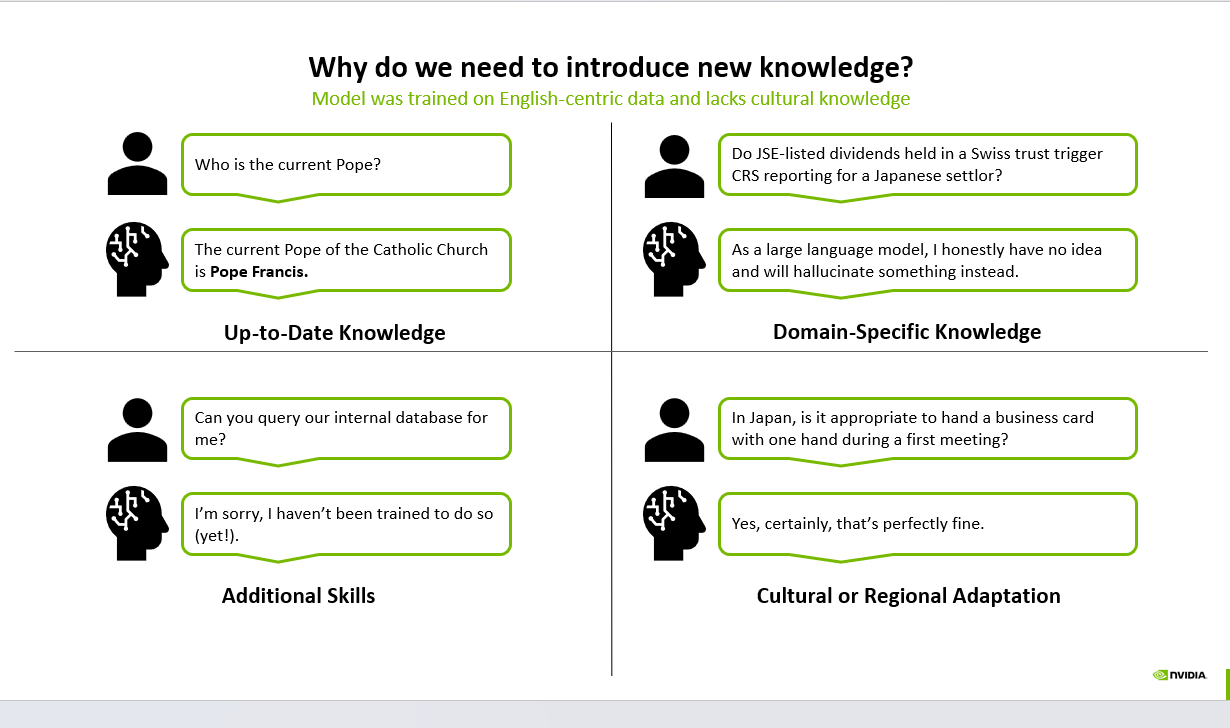

This article explores why updating and enriching AI knowledge is critical, based on four key dimensions: up-to-date knowledge, domain-specific knowledge, additional skills, and cultural adaptation.

1. Up-to-Date Knowledge

AI models are trained on large datasets collected up to a fixed cutoff date. Anything that happens after that date is missing from the model, so its knowledge starts aging as soon as training ends.

For example, asking a simple question like "Who is the current Pope?" requires awareness of recent events. If the model hasn’t been updated, it may provide incorrect or outdated information.

Why it matters:

Real-world facts change constantly

Users expect accurate, current answers

Outdated responses reduce trust in AI systems

Solution:

Continuous model updates

Real-time data integration (APIs, search)

Retrieval-Augmented Generation (RAG)
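
As a rough illustration of the retrieval step in RAG, the sketch below ranks a small in-memory store by word overlap with the question and places the best matches ahead of the question in the prompt. Everything here is an assumption made for illustration: the `Document` class, the `STORE` contents, and the overlap scoring are hypothetical, and a production system would more likely use embeddings and a vector index. The call to the model itself is left out.

```python
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str
    updated: str  # ISO date string, so plain string comparison orders by date


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in text)
    return set(cleaned.split())


def retrieve(query: str, docs: list[Document], k: int = 2) -> list[Document]:
    """Rank documents by word overlap with the query; newer documents win ties."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(d.text)), d.updated, d) for d in docs]
    # Keep only documents that share at least one word with the query.
    matches = [item for item in scored if item[0] > 0]
    matches.sort(key=lambda item: (item[0], item[1]), reverse=True)
    return [d for _, _, d in matches[:k]]


def build_prompt(query: str, docs: list[Document]) -> str:
    """Put retrieved passages before the question so the model can ground its answer."""
    context = "\n".join(f"[{d.doc_id}, updated {d.updated}] {d.text}" for d in docs)
    return (
        "Answer using only the context below. If it is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical store with placeholder contents.
STORE = [
    Document("news-001", "The city council elected a new chair this week.", "2025-03-01"),
    Document("news-002", "The city council chair announced a budget review.", "2024-11-15"),
    Document("faq-007", "Office hours are nine to five on weekdays.", "2023-06-10"),
]

if __name__ == "__main__":
    question = "Who is the new council chair?"
    print(build_prompt(question, retrieve(question, STORE)))
```

Because the facts live in the store and not in the model weights, refreshing the store is enough to keep answers current without retraining.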

2. Domain-Specific Knowledge

General-purpose AI models often struggle with highly specialized questions.

Consider a question like: "Do JSE-listed dividends held in a Swiss trust trigger CRS reporting for a Japanese settlor?"

This requires deep expertise in:

International taxation

Financial regulations

Jurisdiction-specific laws

A general model cannot answer such queries reliably and may hallucinate a plausible-sounding but wrong answer.

Why it matters:

High-stakes domains (finance, healthcare, legal)

Incorrect answers can lead to serious consequences

Solution:

Fine-tuning on domain-specific datasets

Expert-curated knowledge bases

Hybrid systems combining rules + ML
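
One way to picture a hybrid of rules and ML is a router that checks expert-curated rules first and only falls back to the model when no rule applies, flagging the fallback for human review. The sketch below assumes a hypothetical `Rule` structure, a placeholder rule whose response is not real tax guidance, and a `stub_model` function standing in for an actual model call.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Rule:
    name: str
    keywords: frozenset[str]  # every keyword must appear in the question
    response: str


@dataclass
class Answer:
    text: str
    source: str         # "rule" or "model"
    needs_review: bool  # True when no curated rule backs the answer


def match_rule(question: str, rules: list[Rule]) -> Optional[Rule]:
    """Return the first rule whose keywords all appear in the question."""
    words = set(question.lower().replace("?", " ").replace(",", " ").split())
    for rule in rules:
        if rule.keywords <= words:
            return rule
    return None


def answer(question: str, rules: list[Rule], model: Callable[[str], str]) -> Answer:
    """Prefer a curated rule; otherwise use the model and flag the result for review."""
    rule = match_rule(question, rules)
    if rule is not None:
        return Answer(rule.response, source="rule", needs_review=False)
    return Answer(model(question), source="model", needs_review=True)


# Placeholder rule: the response text is illustrative, not real guidance.
RULES = [
    Rule(
        name="crs-trust",
        keywords=frozenset({"crs", "trust"}),
        response="Route to a tax specialist; see the curated CRS trust note (placeholder).",
    ),
]


def stub_model(question: str) -> str:
    """Hypothetical stand-in for a call to a general-purpose model."""
    return f"[draft answer for: {question}]"


if __name__ == "__main__":
    q = "Do JSE-listed dividends held in a Swiss trust trigger CRS reporting for a Japanese settlor?"
    print(answer(q, RULES, stub_model))
```

The design choice is that high-stakes questions covered by a curated rule never depend on free-form generation, and anything the model answers alone is marked for review.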

3. Additional Skills (Tool Use & Integration)

On its own, a language model only generates text. It cannot query databases, call APIs, or interact with enterprise systems.

For example: "Can you query our internal database for me?"

A standard model cannot do this unless explicitly designed with tool-use capabilities.

Why it matters:

Real-world tasks require execution, not just answers

Businesses need automation, not just conversation

Solution:

Tool-augmented AI (agents)

API integrations

Function calling and workflow orchestration
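
Function calling can be sketched as a registry of named tools plus a dispatcher that executes a structured request emitted by the model. The example below is a minimal sketch under stated assumptions: the `{"name": ..., "arguments": {...}}` JSON shape, the `tool` decorator, and the `count_orders` function with its fake data are all hypothetical, and real providers each define their own tool-call formats.

```python
import json
from typing import Any, Callable

# Registry of tools the model is allowed to call, keyed by function name.
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the dispatcher can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def count_orders(customer_id: str) -> int:
    """Hypothetical stand-in for a query against an internal database."""
    fake_db = {"c-100": 3, "c-200": 0}
    return fake_db.get(customer_id, 0)


def dispatch(tool_call_json: str) -> dict[str, Any]:
    """Run a tool call of the assumed form {"name": ..., "arguments": {...}}."""
    try:
        call = json.loads(tool_call_json)
    except json.JSONDecodeError:
        return {"ok": False, "error": "malformed tool call"}
    if not isinstance(call, dict):
        return {"ok": False, "error": "tool call must be a JSON object"}
    name = call.get("name")
    fn = TOOLS.get(name)
    if fn is None:
        # Only registered tools can run; unknown names are rejected.
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        result = fn(**call.get("arguments", {}))
    except TypeError as exc:
        return {"ok": False, "error": f"bad arguments: {exc}"}
    return {"ok": True, "result": result}


if __name__ == "__main__":
    request = '{"name": "count_orders", "arguments": {"customer_id": "c-100"}}'
    print(dispatch(request))
```

The result dictionary would normally be passed back to the model so it can phrase the final answer for the user.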

4. Cultural and Regional Adaptation

AI models are often trained on English-centric or Western datasets. This creates gaps in cultural understanding.

For instance: "In Japan, is it appropriate to hand a business card with one hand during a first meeting?"

A culturally unaware model might say that this is acceptable, whereas business etiquette in Japan calls for presenting the card with both hands as a sign of respect.

Why it matters:

Cultural sensitivity is critical in global applications

Incorrect responses can offend users or harm business relationships

Solution:

Multilingual and multicultural training data

Localization layers

Region-specific fine-tuning
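
A localization layer can be as simple as attaching region-specific guidance to the instructions sent to the model. The sketch below assumes a hypothetical `REGION_NOTES` table keyed by locale code and a `build_system_prompt` helper. The single note it contains restates the business card example above, and a real table would need to be curated by people who know each region.

```python
# Hypothetical table of region-specific guidance, keyed by locale code.
REGION_NOTES: dict[str, list[str]] = {
    "ja-JP": [
        "Business cards are presented with both hands as a sign of respect.",
    ],
}

DEFAULT_NOTE = "No region-specific guidance is available; avoid assuming local customs."


def build_system_prompt(base: str, locale: str) -> str:
    """Append regional guidance for the locale, or a cautious default if none exists."""
    notes = REGION_NOTES.get(locale) or [DEFAULT_NOTE]
    bullet_lines = "\n".join(f"- {note}" for note in notes)
    return f"{base}\n\nRegional guidance for {locale}:\n{bullet_lines}"


if __name__ == "__main__":
    print(build_system_prompt("You are a helpful assistant.", "ja-JP"))
```

Keeping the guidance in a table outside the model means it can be reviewed and extended per region without retraining.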

The Bigger Picture: From Static Models to Adaptive Systems

The future of AI lies in moving beyond static, pre-trained models toward dynamic, continuously learning systems. These systems should:

Learn from new data in real time

Adapt to specific domains and users

Integrate with external tools and systems

Respect cultural and regional nuances

Conclusion

Introducing new knowledge into AI systems is not optional—it is essential. Without it, AI remains limited, unreliable, and disconnected from real-world needs.

By addressing gaps in timeliness, domain expertise, functional capability, and cultural awareness, we can build AI systems that are not only intelligent but also useful, trustworthy, and globally relevant.

The evolution of AI depends not just on bigger models, but on better knowledge integration.

In the age of AI, knowledge is not static—it’s a continuously evolving asset.
