Sesame Voice In 2026: What The Model Does And Where It Fits

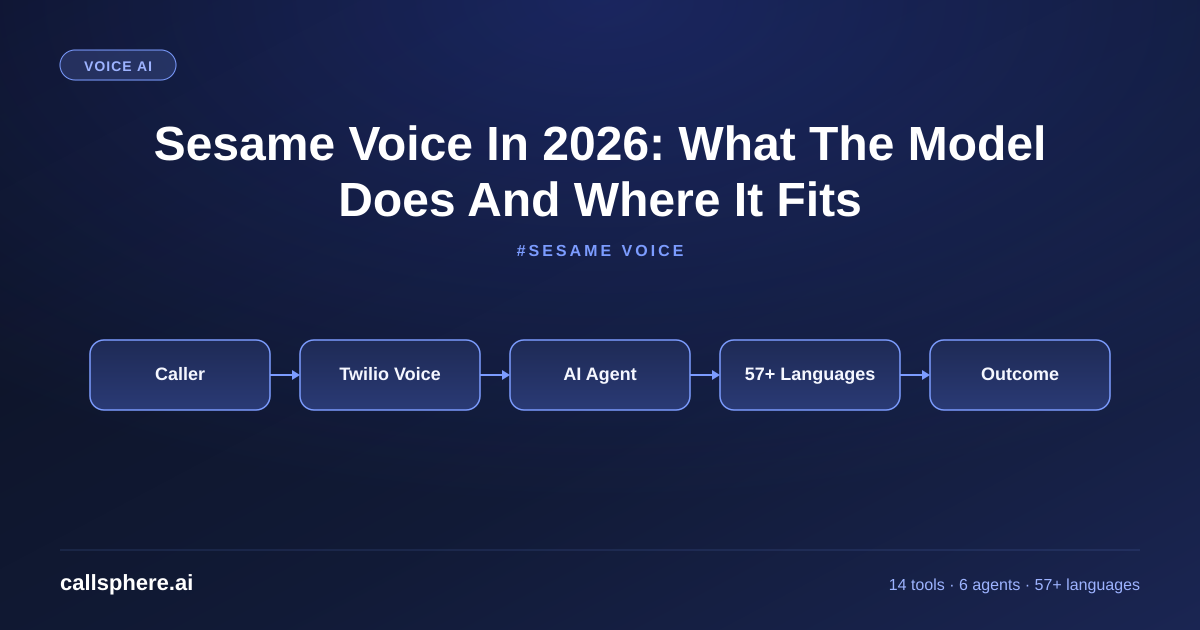

Sesame voice has emerged as a top TTS option in 2026. Here is what the Sesame voice model actually is, how it sounds, and where CallSphere uses it.

TL;DR

- Sesame voice is a 2024–2026 TTS model line known for natural prosody and conversational warmth.

- Strengths: emotional inflection, natural pacing, and sub-300ms first-byte latency when streaming.

- CallSphere routes to Sesame for use cases where brand voice warmth matters most.

- Plans start at $149/mo (Starter), with a 14-day free trial and 57+ languages supported across the platform.

This is part of our Customer Service Representative guide.

What sesame voice actually is

Sesame voice refers to the TTS (text-to-speech) model line developed by Sesame AI, originally released in 2024 and refined through several iterations in 2025–2026. It made waves because of one specific quality: the voices sound emotionally alive in a way most TTS models don't. The prosody — the rise and fall of natural speech, the pauses, the emphasis on the right syllable — was a step change from prior generations of TTS.

I run CallSphere, and we route to Sesame voice for specific use cases where warmth and natural inflection matter most: hospitality, healthcare bedside manner, and high-empathy customer retention. For purely transactional voice (order status, appointment confirmation), faster and cheaper models such as OpenAI TTS or Deepgram Aura often suffice. The right TTS isn't always the most expressive one; it's the one that matches the use case.

What makes Sesame distinct in 2026:

- Natural prosody — pauses, emphasis, breath sounds feel organic

- Sub-300ms first-byte latency on streaming, critical for voice agents

- Voice cloning quality — short reference samples (~30 seconds) produce convincing custom voices

- Multilingual support — strong English, growing depth in 15+ other languages

- Conversational tuning — handles interjections, backchannels, mid-sentence corrections well
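First-byte latency is the number that matters most for a voice agent, and it is easy to measure yourself. The sketch below is a minimal, vendor-neutral way to do it, assuming your TTS client returns streamed audio as an iterator of byte chunks; `fake_stream` is a hypothetical stand-in for a real streaming call, not Sesame's API.

```python
import time
from typing import Iterable, Iterator, Tuple


def time_to_first_byte(chunks: Iterable[bytes]) -> Tuple[float, bytes]:
    """Return (seconds until the first non-empty chunk, that chunk).

    `chunks` is any iterator of audio bytes, e.g. a streaming TTS response.
    The clock starts when this function is called, so create the iterator
    lazily (as a generator does) to include request setup in the timing.
    """
    start = time.perf_counter()
    for chunk in chunks:
        if chunk:  # skip empty keep-alive chunks
            return time.perf_counter() - start, chunk
    raise ValueError("stream ended without producing audio")


def fake_stream(delay_s: float) -> Iterator[bytes]:
    # Hypothetical stand-in for a streaming TTS call: waits, then yields audio.
    time.sleep(delay_s)
    yield b"\x00" * 320
    yield b"\x00" * 320


if __name__ == "__main__":
    ttfb, first = time_to_first_byte(fake_stream(0.12))
    print(f"TTFB: {ttfb * 1000:.0f} ms ({len(first)} bytes)")
    print("within 300 ms target:", ttfb < 0.300)
```

Point `time_to_first_byte` at a real streaming response and repeat it over many requests; the median and tail (p95) tell you more than a single run.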

What is the Sesame voice model architecture?

The Sesame voice model is a neural TTS architecture combining a language model that predicts acoustic features with a neural vocoder that renders waveforms. The specific innovations Sesame disclosed publicly include attention to dialogue context (the model sees the full conversation, not just the current sentence) and a tuning objective that explicitly optimizes for human listener perception of naturalness, not just transcript fidelity.
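To make "dialogue context" concrete, here is a toy sketch of the difference in what the model is conditioned on. A sentence-level TTS sees only the line it is about to speak; a context-aware model also sees earlier turns. The `Turn` type, `build_tts_context`, and the `max_turns` cap are illustrative assumptions added to keep the example bounded, not Sesame's actual input format.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Turn:
    speaker: str  # e.g. "caller" or "agent"
    text: str


def build_tts_context(history: List[Turn], next_text: str, max_turns: int = 6) -> List[Turn]:
    """Hypothetical: assemble what a context-aware TTS model is conditioned on.

    A sentence-level TTS would receive only `next_text`. A dialogue-aware model
    also receives recent turns, so it can match tone (e.g. soften after a complaint).
    `max_turns` is an assumed cap on how much history is passed along.
    """
    # Guard max_turns <= 0 explicitly: history[-0:] would return the whole list.
    recent = history[-max_turns:] if max_turns > 0 else []
    return recent + [Turn(speaker="agent", text=next_text)]


history = [
    Turn("caller", "My booking was cancelled and nobody told me."),
    Turn("agent", "I'm so sorry about that."),
    Turn("caller", "Can you fix it?"),
]
for turn in build_tts_context(history, "Yes, I can rebook you right now.", max_turns=2):
    print(f"{turn.speaker}: {turn.text}")
```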

Compared to other 2026 TTS models:

- OpenAI TTS — fast, cheap, broad language coverage, less expressive

- ElevenLabs — strong voice cloning, paid premium quality, slightly slower

- Sesame — best-in-class naturalness for conversational use, mid-tier price

- Cartesia / Sonic — competitive on latency, newer to market

- Google / Azure TTS — broad and stable, less conversational warmth

The trade-offs are real. Sesame is not the cheapest, not the fastest, and not the broadest in language coverage. It is the most natural-sounding for English conversational use cases, which is why it earns a spot in CallSphere's routing layer.

When should you use Sesame voice instead of an alternative?

Three use cases where Sesame is the right pick:

- High-empathy customer interactions — retention, complaint resolution, healthcare patient outreach

- Brand voice consistency — hotels, hospitality, premium service businesses

- Long-form audio — IVR welcome messages, voicemail greetings, audio content

Three use cases where you might pick something else:

- Pure transactional voice — order status, appointment confirmations (OpenAI TTS or Deepgram Aura is cheaper)

- Lowest-possible-latency real-time — Cartesia or specialized streaming TTS

- Heavy non-English coverage — Google or Azure TTS still covers more of the long tail of languages

CallSphere's routing layer makes this decision per call, based on the agent's vertical, the caller's language, and the conversation context. Healthcare gets a warm Sesame voice; sales qualification gets a faster default voice; international calls route to whichever model has the best coverage for that language.
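The per-call decision can be sketched as a small rule function. This is a hypothetical illustration built from the defaults described in this article (warm verticals, Sesame's strongest languages, a faster default elsewhere), not CallSphere's production code; the voice labels, vertical names, and the `high_empathy` flag standing in for "conversation context" are all assumptions.

```python
from enum import Enum


class Voice(str, Enum):
    SESAME = "sesame"                  # warm, conversational
    FAST_DEFAULT = "fast-default"      # placeholder for a cheaper, faster TTS
    BROAD_COVERAGE = "broad-coverage"  # placeholder for a wide-language TTS


# Assumed sets, mirroring the defaults described in this article.
WARM_VERTICALS = {"healthcare", "hotel_concierge"}
SESAME_LANGUAGES = {"en", "fr", "de", "es"}


def route_tts(vertical: str, language: str, high_empathy: bool = False) -> Voice:
    """Hypothetical per-call TTS routing rule (not CallSphere's actual code)."""
    # 1. Language coverage wins: long-tail languages go to the broad model.
    if language not in SESAME_LANGUAGES:
        return Voice.BROAD_COVERAGE
    # 2. Warm verticals, or calls flagged as high-empathy, get Sesame.
    if vertical in WARM_VERTICALS or high_empathy:
        return Voice.SESAME
    # 3. Everything else (e.g. sales qualification) uses the faster default.
    return Voice.FAST_DEFAULT


print(route_tts("healthcare", "en").value)       # sesame
print(route_tts("sales", "en").value)            # fast-default
print(route_tts("hotel_concierge", "ja").value)  # broad-coverage
```

Checking language first is a design choice: a warm voice that handles the caller's language poorly is worse than a plainer voice that handles it well.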

How CallSphere uses Sesame voice in production

The CallSphere voice stack:

- Per-vertical voice selection — healthcare and hotel concierge agents use Sesame by default; sales and salon-booking agents use faster defaults

- Per-language fallback — Sesame for English, French, German, Spanish; fallback to broader models for the long tail of our 57+ languages

- Streaming integration — sub-300ms first-byte latency on Sesame, kept within our 600ms total turn budget

- Brand voice cloning — Scale tier customers can upload a 30–60s reference clip and we tune a custom voice

- Cost management — Sesame is mid-tier price; we cache common phrases (greetings, confirmations) to keep per-call TTS spend down

- 14 function tools running independently of the TTS layer — the agent's actions don't change with the voice
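Caching common phrases works because greetings and confirmations are synthesized with the same text and voice over and over. A minimal sketch, assuming the TTS client is wrapped as a `synthesize(voice_id, text)` function (a hypothetical name): audio is stored per (voice, normalized text) pair, and the billable synthesis call happens only on a cache miss.

```python
from typing import Callable, Dict, List, Tuple


class PhraseCache:
    """Hypothetical cache for repeated TTS phrases (greetings, confirmations).

    `synthesize(voice_id, text)` stands in for whatever TTS client is used;
    it is called only on a cache miss.
    """

    def __init__(self, synthesize: Callable[[str, str], bytes]) -> None:
        self._synthesize = synthesize
        self._audio: Dict[Tuple[str, str], bytes] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(voice_id: str, text: str) -> Tuple[str, str]:
        # Collapse whitespace so trivially different strings share an entry.
        # The voice is part of the key: same text in another voice is different audio.
        return voice_id, " ".join(text.split())

    def get(self, voice_id: str, text: str) -> bytes:
        key = self._key(voice_id, text)
        if key in self._audio:
            self.hits += 1
            return self._audio[key]
        self.misses += 1
        audio = self._synthesize(voice_id, text)
        self._audio[key] = audio
        return audio


calls: List[str] = []


def fake_tts(voice_id: str, text: str) -> bytes:
    calls.append(text)           # record each billable synthesis call
    return text.encode("utf-8")  # stand-in for audio bytes


cache = PhraseCache(fake_tts)
for _ in range(3):
    cache.get("sesame-warm", "Thank you for calling.")
cache.get("sesame-warm", "Thank you  for calling.")  # extra space, same entry
print(cache.hits, cache.misses, len(calls))  # 3 1 1
```

Only fixed, non-personalized phrases belong in a cache like this, and a production version would also bound its size; this sketch keeps everything in an unbounded dict for clarity.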

A real example walk-through

A regional hotel group (4 properties, ~340 rooms total) in upstate NY wanted their AI concierge to sound like a real luxury concierge, not a generic assistant. They moved to CallSphere's hotel concierge agent (Scale tier, $1,499/mo for all 4 properties combined) in March 2026 with Sesame voice routing enabled:

- Voice quality feedback: 89% of surveyed guests rated the AI's voice "very natural" vs 51% on the prior IVR

- Concierge handle time: 4:20 average (vs 6:50 with the prior staffed line)

- Languages handled at natural quality: English, Spanish, French, German, Italian (top 5 of guest language mix)

- Booking conversion on inbound inquiries: up 23% post-deployment

- Net cost change: -$2,800/mo vs the prior outsourced concierge service across 4 properties
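The handle-time figures are given as minutes:seconds, which hides the size of the change. A quick check using only the numbers reported above:

```python
def mmss_to_seconds(mmss: str) -> int:
    """Convert an "M:SS" duration string to seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


before = mmss_to_seconds("6:50")  # prior staffed line
after = mmss_to_seconds("4:20")   # AI concierge
saved = before - after
print(f"{saved} s saved per call ({saved / before:.1%} shorter)")
print(f"${2800 / 4:,.0f}/mo saved per property on average")
```

This prints a 150-second (about 36.6%) reduction in average handle time and roughly $700/mo saved per property.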

The voice quality was the unlock — guests trusted the agent enough to share preferences and let it make real recommendations.

Pricing & how to try it

CallSphere's voice routing (including Sesame for eligible verticals) is included in every tier:

- Starter — $149/mo — 2,000 interactions

- Growth — $499/mo — 10,000 interactions (most popular)

- Scale — $1,499/mo — 50,000 interactions, custom voice cloning available

Annual billing saves ~15%. 14-day free trial, no credit card required. Setup: 3–5 business days.
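The tier list above implies an effective cost per included interaction that falls as you move up tiers. A small sketch of that arithmetic, assuming the full interaction allowance is used and treating "~15%" as exactly 15% (both are simplifications):

```python
from typing import Tuple

# Monthly price (USD) and included interactions, from the tier list above.
TIERS = {
    "Starter": (149, 2_000),
    "Growth": (499, 10_000),
    "Scale": (1_499, 50_000),
}


def effective_costs(price: float, interactions: int, annual_discount: float = 0.15) -> Tuple[float, float]:
    """Return (cost per included interaction, approximate yearly cost on annual billing)."""
    per_interaction = price / interactions
    yearly_annual_billing = price * 12 * (1 - annual_discount)
    return per_interaction, yearly_annual_billing


for name, (price, interactions) in TIERS.items():
    per, yearly = effective_costs(price, interactions)
    print(f"{name}: ${per:.4f} per interaction, about ${yearly:,.0f}/yr on annual billing")
```

Per interaction, Scale works out to about 3 cents versus about 7.5 cents on Starter, so the higher tiers only pay off if you actually use the larger allowance.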

Frequently asked questions

Q: What is Sesame voice and why does it sound different from other TTS? A: Sesame voice is a 2024–2026 TTS model line known for natural prosody and emotional inflection. It sounds different because Sesame's training objective explicitly optimizes for perceived naturalness, and the model architecture considers full dialogue context, not just the current sentence. It is one of the top TTS picks for conversational use cases in 2026.

Q: How does the Sesame voice model compare to ElevenLabs? A: The Sesame voice model beats ElevenLabs on conversational warmth and natural prosody, especially across dialogue turns. ElevenLabs has stronger voice cloning fidelity for specific brand voices. CallSphere uses both: Sesame for default conversational warmth, ElevenLabs when a client provides a specific brand voice reference.

Q: What languages does Sesame voice support? A: Strong English, with deepening quality in 15+ other languages including Spanish, French, German, Italian, Portuguese, and Mandarin. For languages outside Sesame's strongest set, CallSphere routes to broader TTS models; across our platform we cover 57+ languages with native accent voices.

Q: Is Sesame voice good for healthcare voice agents? A: Yes. Sesame's natural warmth fits healthcare bedside-manner use cases well. CallSphere's HIPAA-ready, BAA-ready healthcare agent uses Sesame as the default voice for English patient calls. The voice quality measurably increases patient comfort and information-sharing.

Q: How fast is Sesame voice in real-time streaming? A: First-byte latency is under 300ms when streaming, which fits inside the 600ms total turn budget CallSphere targets for production-grade voice agents.

Q: Can I clone a custom voice with Sesame? A: Yes. Sesame supports voice cloning from a 30–60 second reference clip. CallSphere offers this on the Scale tier for brand-specific deployments.

Q: How much does Sesame voice cost compared to OpenAI TTS? A: Sesame is priced mid-tier: more than OpenAI's standard TTS, less than ElevenLabs premium voices. For CallSphere customers, all TTS costs are absorbed into the tier pricing; you don't pay per character or per second separately.

Q: When should I not use Sesame voice? A: For purely transactional voice (order status, simple confirmations), a faster and cheaper TTS is fine. For long-tail languages outside Sesame's strongest 15+, route to broader models. For ultra-low-latency real-time use cases (gaming, AR), specialized streaming TTS may beat Sesame's latency profile.
