Stream Voices: How Realtime Voice AI Streams Audio in 2026

What stream voices means in 2026 - WebRTC, SIP, streaming TTS, and the GPT-Realtime-2 voice agent stack that powers production AI voice apps with first-audio latency under 600ms.

TL;DR

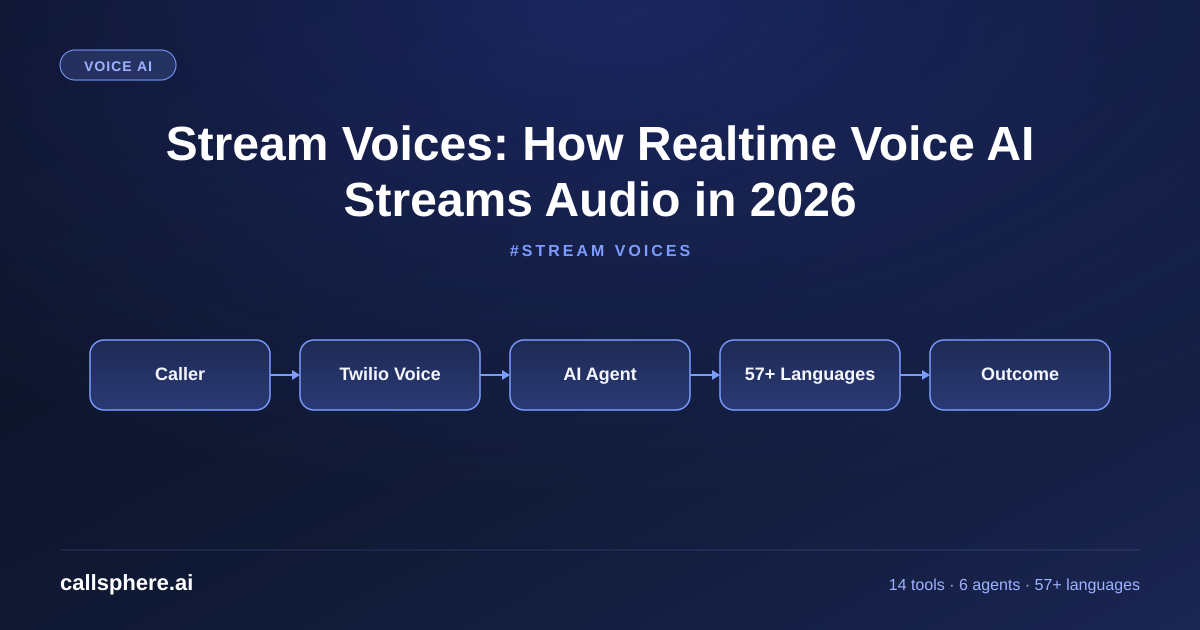

- "Stream voices" in 2026 refers to realtime voice AI that streams audio bidirectionally - the agent listens, thinks, and speaks in a continuous flow rather than turn-by-turn audio file exchange.

- The stack: WebRTC or SIP for transport, GPT-Realtime-2 (or equivalent) for integrated voice reasoning, function-calling for tool use, sub-600ms first-audio latency.

- The shift from chunked TTS/STT to integrated streaming voice models is what made AI phone agents finally usable for production in 2024-2026.

- CallSphere uses streaming voices on every call across our 6 verticalized agents. The technical implementation matters because of the user-visible result: "the AI sounds like a human" rather than "the AI sounds like a chatbot reading a script."

This is part of our Siri Voice Generator pillar guide.

What "stream voices" actually means in 2026

"Stream voices" is the search-friendly phrasing for streaming voice AI - the technical architecture where audio flows continuously between the user and the AI agent rather than as discrete request-response exchanges. The old pattern (2020-2022): user finishes speaking, audio file goes to speech-to-text, text goes to LLM, LLM response goes to text-to-speech, TTS audio file plays. Latency: 2-5 seconds. The new pattern (2024-2026): audio streams from user to model continuously, the model produces audio output continuously, both directions overlap.

I ship CallSphere, and the streaming architecture is the single biggest technical change that took AI phone agents from "novelty" to "production." Sub-600ms first-audio latency is the point where callers stop perceiving the agent as slow. Up to 800ms still feels natural; above 1,500ms it feels broken. The streaming voice architecture is what keeps you below 800ms reliably.
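The difference between the old chunked pattern and the streaming pattern can be shown with a toy sketch. It assumes audio is just a list of labeled chunks, and `mic`, `chained_turn`, `streaming_turn`, and `run` are hypothetical names for illustration. A real voice model does not reply chunk-for-chunk; the only point here is the ordering of input and output events.

```python
from typing import Callable, Iterable, Iterator, List


def mic(chunks: List[str], events: List[str]) -> Iterator[str]:
    """Hypothetical audio source: logs each chunk as it is 'heard'."""
    for chunk in chunks:
        events.append(f"in:{chunk}")
        yield chunk


def chained_turn(user_audio: Iterable[str]) -> Iterator[str]:
    """Old pattern: buffer the whole utterance before producing any output."""
    utterance = list(user_audio)  # blocks until the user has finished
    for chunk in utterance:
        yield f"reply({chunk})"


def streaming_turn(user_audio: Iterable[str]) -> Iterator[str]:
    """New pattern: produce output while input is still arriving."""
    for chunk in user_audio:
        yield f"reply({chunk})"


def run(turn: Callable[[Iterable[str]], Iterator[str]], chunks: List[str]) -> List[str]:
    """Run one turn and return the interleaved order of input and output events."""
    events: List[str] = []
    for out in turn(mic(chunks, events)):
        events.append(f"out:{out}")
    return events


chained_order = run(chained_turn, ["a", "b"])
streaming_order = run(streaming_turn, ["a", "b"])
```

In the chained run every `in:` event precedes the first `out:` event. In the streaming run they alternate, which is the overlap that removes the wait for the user to finish.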

The components of a 2026 streaming voice stack:

- Transport: WebRTC (browser) or SIP/RTP (phone). Both stream audio bidirectionally with low latency.

- Voice model: GPT-Realtime-2 (OpenAI), Gemini Live (Google), Claude voice (Anthropic's realtime voice), or open-source alternatives like Moshi (Kyutai).

- Function-calling: tools that the model can invoke mid-stream without breaking the audio flow.

- Observability: streaming transcripts and tool calls written to a database in near-realtime.
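As a rough illustration of the observability component, the sketch below appends transcript fragments and tool calls to a table as they happen and reads them back in order for replay. It uses an in-memory SQLite database as a stand-in for a production database. The `call_events` table, its columns, and the `record_event` and `replay` helpers are assumptions made for this example, not CallSphere's schema.

```python
import json
import sqlite3
import time
from typing import Any, Dict, List, Tuple

# In-memory SQLite stands in for the production database in this sketch.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE call_events (
        call_id TEXT NOT NULL,
        ts      REAL NOT NULL,
        kind    TEXT NOT NULL,
        payload TEXT NOT NULL
    )
    """
)


def record_event(call_id: str, kind: str, payload: Dict[str, Any]) -> None:
    """Append one event (kind is 'transcript' or 'tool_call') as it occurs."""
    conn.execute(
        "INSERT INTO call_events (call_id, ts, kind, payload) VALUES (?, ?, ?, ?)",
        (call_id, time.time(), kind, json.dumps(payload)),
    )
    conn.commit()


def replay(call_id: str) -> List[Tuple[str, Dict[str, Any]]]:
    """Return the events of one call in insertion order."""
    rows = conn.execute(
        "SELECT kind, payload FROM call_events WHERE call_id = ? ORDER BY rowid",
        (call_id,),
    )
    return [(kind, json.loads(payload)) for kind, payload in rows]


record_event("call-1", "transcript", {"speaker": "user", "text": "I need an appointment"})
record_event("call-1", "tool_call", {"name": "book_appointment", "args": {"day": "Tuesday"}})
call_log = replay("call-1")
```

Committing per event keeps the log close to realtime, at the cost of more write traffic than batching would need.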

How does WebRTC stream voices versus SIP?

WebRTC and SIP are the two transport layers for streaming voice in 2026. They serve different surfaces:

- WebRTC: browser-native, peer-to-peer (with TURN servers relaying media when NAT blocks a direct path), used for in-app voice chat, web-embedded voice agents, and mobile SDK voice. CallSphere uses WebRTC for our admin console live demo and any web-embedded voice widget.

- SIP/RTP: telephony-native, carrier-routed, used for phone calls from the public phone network. CallSphere uses SIP for every PSTN call - patient calls to a clinic, leads calling a real estate brokerage, customers calling a support line.

Both stream audio bidirectionally with similar latency characteristics (typically 100-200ms transport latency on top of the AI processing time). The choice between them is determined by where the user is - browser or phone. Many voice AI platforms support both; CallSphere's same agent runtime handles WebRTC and SIP sessions identically.

The technical implication for buyers: when evaluating a voice AI platform, ask which transport they natively support. Browser-only platforms (no SIP) cannot answer real phone calls. SIP-only platforms cannot embed in your web app. CallSphere ships both.
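The buyer check above reduces to a lookup from user surface to transport. This is a minimal sketch, assuming surface labels such as `browser`, `mobile_sdk`, and `pstn`; those labels and the `pick_transport` and `can_serve` helpers are hypothetical and do not come from any platform's API.

```python
from enum import Enum
from typing import Set


class Transport(Enum):
    WEBRTC = "webrtc"
    SIP = "sip"


# Hypothetical surface labels mapped to the transport each one needs.
SURFACE_TO_TRANSPORT = {
    "browser": Transport.WEBRTC,
    "web_widget": Transport.WEBRTC,
    "mobile_sdk": Transport.WEBRTC,
    "pstn": Transport.SIP,
}


def pick_transport(surface: str) -> Transport:
    """Return the transport required by where the user is."""
    try:
        return SURFACE_TO_TRANSPORT[surface]
    except KeyError:
        raise ValueError(f"unknown surface: {surface}") from None


def can_serve(platform_transports: Set[Transport], surface: str) -> bool:
    """True if a platform with these native transports can reach this surface."""
    return pick_transport(surface) in platform_transports


browser_only = {Transport.WEBRTC}
both = {Transport.WEBRTC, Transport.SIP}
```

With these definitions, `can_serve(browser_only, "pstn")` is `False`, which is the "cannot answer real phone calls" case.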

How does GPT-Realtime-2 stream voices?

GPT-Realtime-2 (OpenAI, released May 2026) is the current state-of-the-art for streaming voice. The key technical changes from earlier models:

- 128K context window (up from 32K) - holds the full conversation, tool history, and system prompt without truncation.

- Integrated TTS + reasoning + STT in a single model rather than three separate components. No chained latency.

- 32K max output tokens - sufficient for long agent turns when needed.

- Cached input at $0.40 per 1M tokens - the cost lever that makes long instruction-heavy voice agents economically viable.

- GPT-5-class reasoning under the hood - the model handles complex tool decisions mid-stream.

For CallSphere this is the production voice engine on every call. We hit sub-600ms first-audio latency p95, which is the threshold where callers do not perceive the AI as slow. The 128K context lets us run rich system prompts (policy, FAQ, escalation rules, voice persona) inline without RAG-ing fragments mid-call.
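To see why the cached-input price matters for instruction-heavy agents, here is a back-of-the-envelope sketch using the $0.40 per 1M cached tokens figure quoted above. The 20,000-token prompt and the 100 model turns are made-up inputs. The sketch also assumes that every turn re-reads the whole prompt at the cached rate, and it ignores audio and output tokens, so treat it as arithmetic rather than a billing model.

```python
CACHED_INPUT_USD_PER_MILLION = 0.40  # figure quoted in this article


def cached_input_cost(prompt_tokens: int, turns: int) -> float:
    """USD cost of re-reading a cached prompt once per model turn.

    Assumes every turn is billed at the cached-input rate (an assumption
    of this sketch, not a statement about actual billing).
    """
    total_tokens = prompt_tokens * turns
    return total_tokens / 1_000_000 * CACHED_INPUT_USD_PER_MILLION


# Hypothetical call: 20,000-token system prompt, 100 model turns.
example_cost = cached_input_cost(prompt_tokens=20_000, turns=100)
```

Under those assumptions the prompt is read 2,000,000 tokens' worth across the call, which comes to about $0.80 of cached input.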

The competitive landscape in 2026: GPT-Realtime-2 is the production leader. Gemini Live (Google) is competitive on latency. Anthropic's Claude realtime voice (announced March 2026) is gaining production traction. Moshi (Kyutai, open-source) is the strongest self-hosted option for teams that cannot use US-based APIs for data sovereignty reasons.

Why does streaming voice matter for production AI agents?

The user-visible difference between streaming voice and the old chunked pattern is dramatic:

- Interruption handling: in a streaming model, the agent stops talking the moment the user starts talking. Old chunked TTS would talk over interruptions because it was playing a pre-rendered audio file.

- Natural pacing: streaming models produce speech with realistic pauses, breaths, "uh"s, and variable speed. Chunked TTS sounded uniformly paced.

- Tool calls mid-conversation: the agent can invoke a function tool (look up a customer, check inventory, book an appointment) without breaking the audio flow.

- Multi-turn coherence: 128K context plus streaming means a 45-minute call feels as coherent as a 3-minute one.

- Latency: first audio under 800ms feels natural to callers, and under 600ms they do not consciously notice the AI is processing. Chunked architectures sat at 2-5 seconds.
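The interruption-handling point in the list above can be sketched as a playback loop that checks for user speech before each chunk. This is a simplified simulation: `play_with_barge_in` is a hypothetical helper, and `user_speech_at` stands in for whatever voice-activity signal a real stack provides.

```python
from typing import List, Set


def play_with_barge_in(agent_chunks: List[str], user_speech_at: Set[int]) -> List[str]:
    """Play agent audio chunk by chunk and stop when the user starts talking.

    user_speech_at holds the chunk indexes at which a (hypothetical)
    voice-activity detector reports user speech.
    """
    played: List[str] = []
    for index, chunk in enumerate(agent_chunks):
        if index in user_speech_at:
            break  # barge-in: drop the rest of the agent's audio
        played.append(chunk)
    return played


reply = ["Your", "appointment", "is", "confirmed"]
interrupted = play_with_barge_in(reply, user_speech_at={2})
uninterrupted = play_with_barge_in(reply, user_speech_at=set())
```

A pre-rendered audio file has no per-chunk check like this, which is why chunked TTS talked over callers.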

The economic implication: streaming voice agents have call-completion rates of 60-85% in production (CallSphere customer averages). Chunked-architecture predecessors sat at 20-40%. The 2-3x improvement in completion is the difference between "usable in production" and "novelty demo."

How CallSphere uses streaming voice in production

The CallSphere streaming stack:

- GPT-Realtime-2 as the voice engine across all 6 verticalized agents (healthcare, real estate, sales, salon, after-hours, hotel).

- WebRTC for in-browser sessions (admin demo, web-embedded widget, mobile SDK).

- SIP/RTP via STIR/SHAKEN A-attested carriers (Twilio, Bandwidth) for PSTN calls.

- 14 function tools that the streaming model can invoke mid-call without breaking audio flow - book_appointment, lookup_customer, transfer_to_human, schedule_callback, etc.

- 57+ languages with auto-detection on the first utterance; the streaming model switches voice and locale mid-call without a perceptible pause.

- Sub-600ms first-audio latency p95 - the threshold below which callers perceive the agent as natural.

- 20+ Postgres tables capturing the streaming transcript, function-tool calls, and outcome labels in near-realtime for replay and QA.

- Observability: every call replayable in the admin UI with the prompt, the streaming transcript, and the tool calls visible.
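One common way to wire function tools into a voice session is a name-to-handler registry, sketched below. The tool names `lookup_customer` and `book_appointment` come from the list above. The handler bodies, argument shapes, and the `tool` and `dispatch` helpers are placeholders invented for this example, not CallSphere's implementation.

```python
from typing import Any, Callable, Dict

ToolHandler = Callable[[Dict[str, Any]], Dict[str, Any]]
TOOLS: Dict[str, ToolHandler] = {}


def tool(name: str) -> Callable[[ToolHandler], ToolHandler]:
    """Decorator that registers a handler under the name the model will call."""
    def register(handler: ToolHandler) -> ToolHandler:
        TOOLS[name] = handler
        return handler
    return register


@tool("lookup_customer")
def lookup_customer(args: Dict[str, Any]) -> Dict[str, Any]:
    # Placeholder body: a real handler would query a CRM.
    return {"customer_id": "demo-123", "phone": args["phone"]}


@tool("book_appointment")
def book_appointment(args: Dict[str, Any]) -> Dict[str, Any]:
    # Placeholder body: a real handler would write to a scheduling system.
    return {"status": "booked", "slot": args["slot"]}


def dispatch(name: str, args: Dict[str, Any]) -> Dict[str, Any]:
    """Run the named tool, or return an error the model can read and recover from."""
    handler = TOOLS.get(name)
    if handler is None:
        return {"error": f"unknown tool: {name}"}
    return handler(args)


result = dispatch("book_appointment", {"slot": "Tue 10:00"})
```

Returning an error payload for unknown tools, rather than raising, lets the session keep the audio flowing while the model decides what to say next.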

Setup is 3-5 business days. You do not configure the streaming stack - it is the default architecture on every CallSphere call.

See streaming voice agents live with a 14-day free trial - no card required →

A real example walk-through

A 40-clinic urgent care network in Florida was running an older AI voice agent built on the 2023 chunked TTS/STT/LLM pattern. Callers consistently reported that the agent felt slow and talked over them. Call completion was at 38%. They evaluated three alternatives in March 2026: stay on the chunked vendor and wait for upgrades, build their own GPT-Realtime-2 stack, or move to CallSphere.

We deployed the CallSphere healthcare agent in 5 business days. The streaming architecture immediately fixed the two caller-reported issues, and call completion rose with them:

- First-audio latency dropped from ~2.4 seconds to ~580ms p95

- Interruption handling improved (the agent stopped talking when the patient started talking)

- Call completion went from 38% to 71% in the first 30 days

A third issue (the agent occasionally mis-routing complex insurance questions) was a prompt issue, fixed in a follow-up week.

Cost: $1,499/mo CallSphere Scale tier replacing $3,800/mo on the previous vendor. Net win on cost plus a 33-point lift in completion rate.

Pricing and how to try CallSphere

CallSphere pricing is flat monthly, with no per-minute or per-seat fees:

- Starter $149/mo - 2,000 interactions, 1 agent, 1 number

- Growth $499/mo - 10,000 interactions, 3 agents (most popular)

- Scale $1,499/mo - 50,000 interactions, unlimited agents, BAA on request

- 14-day free trial, no card required, 3-5 business days to live

Compare CallSphere streaming voices to other platforms →

Frequently asked questions

What does "stream voices" mean in AI voice? "Stream voices" refers to streaming voice AI - audio flows continuously between the user and the AI agent rather than as discrete request-response chunks. The user speaks, the model processes the audio in flight, the model produces audio output continuously while still listening. This architecture is what enables sub-600ms first-audio latency and natural interruption handling. The 2026 leaders are GPT-Realtime-2 (OpenAI), Gemini Live (Google), and Claude realtime voice (Anthropic). CallSphere uses GPT-Realtime-2 on every customer call.

Why is streaming voice better than traditional TTS/STT? Streaming voice integrates speech-to-text, language model reasoning, and text-to-speech in a single model rather than chaining three separate components. The latency advantage is dramatic: sub-600ms first-audio versus 2-5 seconds for chained architectures. The user-experience advantage is interruption handling, natural pacing, breath sounds, and mid-call tool calls without breaking the audio flow. In production, streaming voice agents have call-completion rates 2-3x higher than chunked predecessors.

What is GPT-Realtime-2 and why does it matter for stream voices? GPT-Realtime-2 is OpenAI's May 2026 release of their streaming voice model. The key changes: 128K context window (up from 32K), 32K max output tokens, integrated TTS and reasoning in one model, GPT-5-class reasoning, $0.40/1M cached input pricing. For production voice agents this is the current state-of-the-art - sub-600ms first-audio latency, natural interruption handling, full conversation context for 45+ minute calls. CallSphere uses GPT-Realtime-2 across all 6 verticalized agents.

Can streaming voice work over a regular phone call? Yes. Streaming voice agents run over SIP/RTP for PSTN phone calls - the same protocol used by VoIP phone systems. The transport adds ~100-200ms of network latency on top of the AI processing time, so first-audio latency on a phone call is typically 500-800ms versus 400-600ms on browser-based WebRTC. CallSphere uses SIP through STIR/SHAKEN A-attested carriers (Twilio, Bandwidth) for every customer's main phone line.

How does WebRTC fit with streaming voice agents? WebRTC is the browser-native transport for streaming voice. It is used for in-app voice chat, web-embedded voice widgets, and mobile SDK voice integrations. It is peer-to-peer (with TURN servers for NAT traversal) and has lower transport latency than SIP for browser-to-browser calls. CallSphere uses WebRTC for our admin console live demo and any customer who embeds the voice agent in their web app.

What is the latency floor for streaming voice in 2026? Sub-600ms first-audio latency p95 is the production target in 2026. Below 600ms feels indistinguishable from a human pickup. 600-800ms feels natural. 800-1200ms feels noticeable but acceptable. Over 1500ms feels broken. CallSphere hits ~580ms p95 on PSTN calls and ~420ms p95 on WebRTC. The latency floor is set by the model itself (typically 300-400ms of processing) plus transport latency (100-300ms).
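The bands and the floor in this answer can be written down as a small sketch. The thresholds and the processing and transport ranges are the ones quoted here; the function and constant names are hypothetical. The answer gives no label for 1200-1500ms, so the sketch returns "unspecified" for that range instead of guessing.

```python
from typing import Tuple


def perceived_quality(first_audio_ms: float) -> str:
    """Map first-audio latency to the perception bands quoted in this answer."""
    if first_audio_ms < 600:
        return "indistinguishable from a human pickup"
    if first_audio_ms <= 800:
        return "natural"
    if first_audio_ms <= 1200:
        return "noticeable but acceptable"
    if first_audio_ms > 1500:
        return "broken"
    return "unspecified"  # no label is given for 1200-1500ms


MODEL_PROCESSING_MS: Tuple[int, int] = (300, 400)  # typical range quoted above
TRANSPORT_MS: Tuple[int, int] = (100, 300)         # range quoted above

# Best case adds the two lower bounds, worst case the two upper bounds.
latency_floor_ms = (
    MODEL_PROCESSING_MS[0] + TRANSPORT_MS[0],
    MODEL_PROCESSING_MS[1] + TRANSPORT_MS[1],
)
```

Adding the bounds gives a floor of 400-700ms, so the quoted ~420ms (WebRTC) and ~580ms (PSTN) figures both sit inside the range.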

Are there open-source alternatives to GPT-Realtime-2 for streaming voice? Yes. Moshi (Kyutai, open-source) is the strongest self-hosted streaming voice model in 2026. Llama Audio (Meta's voice variant) is competitive for English. NVIDIA NeMo has streaming voice components. The tradeoff: open-source models lag GPT-Realtime-2 by ~6-12 months in quality, and self-hosting requires meaningful GPU infrastructure. For teams with data-sovereignty requirements that prevent using US-based APIs, open-source is the right path. For everyone else, the API-based commercial models win on cost and quality.

Does CallSphere support both WebRTC and SIP for stream voices? Yes. The same CallSphere agent runtime handles WebRTC sessions (browser, mobile SDK) and SIP sessions (PSTN phone calls) identically. The audio transport differs; the model, function tools, and observability layer are the same. This matters for customers who want a voice agent on both their main phone line (SIP) and their website (WebRTC) - it is one configuration, one prompt, one set of function tools.
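The "one configuration, one prompt, one set of function tools" idea can be sketched as a single immutable config object shared by sessions on either transport. `AgentConfig`, `Session`, and `start_session` are hypothetical names for illustration and say nothing about how the CallSphere runtime is actually structured.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class AgentConfig:
    """Everything that stays the same regardless of transport."""
    prompt: str
    tools: Tuple[str, ...]


@dataclass(frozen=True)
class Session:
    transport: str  # "webrtc" or "sip"
    config: AgentConfig


def start_session(config: AgentConfig, transport: str) -> Session:
    """Create a session; only the audio transport varies between sessions."""
    if transport not in ("webrtc", "sip"):
        raise ValueError(f"unsupported transport: {transport}")
    return Session(transport=transport, config=config)


config = AgentConfig(
    prompt="You are a clinic receptionist.",
    tools=("book_appointment", "transfer_to_human"),
)
web_session = start_session(config, "webrtc")
phone_session = start_session(config, "sip")
```

Both sessions hold the same `config` object, so a prompt or tool change applies to the website widget and the phone line at once.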
