Agent GPT Explained: What These AI Agents Actually Do in 2026

Agent GPT in 2026 means LLMs that call tools, hold state, and finish work. Here is a founder's plain-English walkthrough with real CallSphere examples.

TL;DR

- Agent GPT in 2026 is shorthand for an LLM that takes goals, picks tools, and executes — not just chats.

- The original AgentGPT project popularized the term in 2023; the production reality in 2026 is a managed runtime with audited tool calls.

- CallSphere runs 6 production "agent GPTs" — each is a prompt + a tool registry + a memory store, on GPT-Realtime-2 with a 128K window.

- If you want to ship one for your business in 5 days instead of 5 months, that is what we built.

This is part of our Customer Service Representative Playbook guide.

What does "agent GPT" actually mean in 2026?

When people search for agent GPT, they usually want to understand one of two things: (1) the AgentGPT open-source project from 2023, or (2) the general concept of GPT-based AI agents that can act, not just talk. The latter is what matters today.

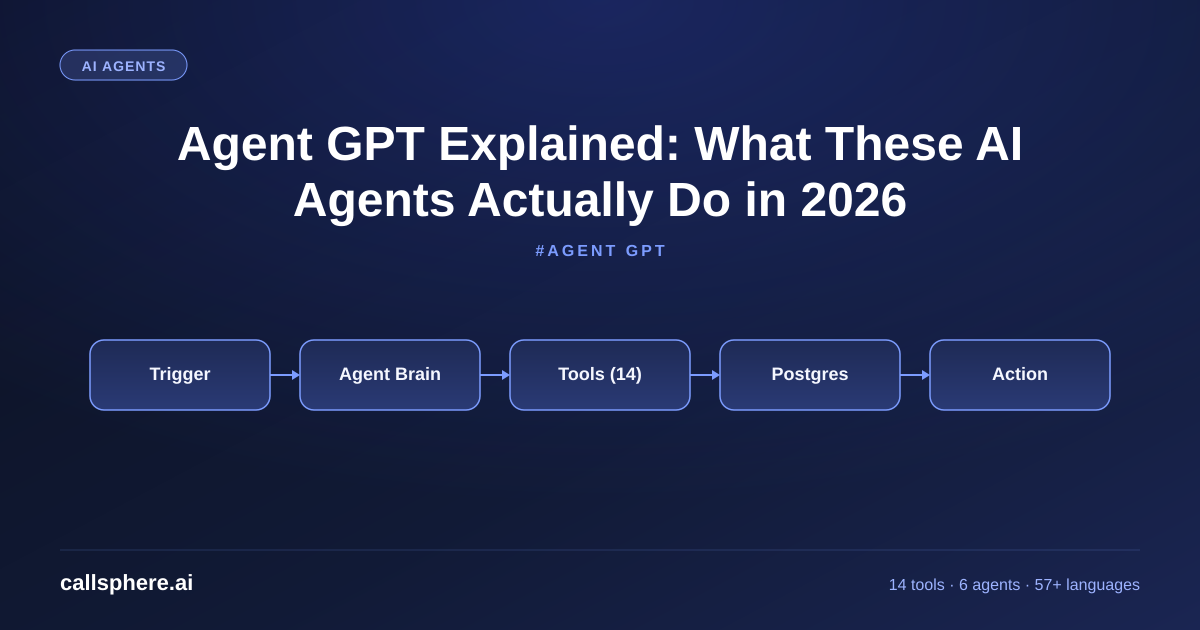

In 2026, an agent GPT is three pieces stacked together. A system prompt that defines the agent's job, policy, and tone. A registry of function tools the agent can call — calendar APIs, CRM writes, payment captures. And a memory store that holds conversation history plus durable facts about the user. The LLM (GPT-4, GPT-5, or GPT-Realtime-2) is the engine; the agent is the engine plus the tooling plus the state.
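As a rough illustration, the three pieces can be written down as one TypeScript type. This is a minimal sketch: `AgentDefinition`, `createAgent`, the model identifier, and every field name are hypothetical and are not CallSphere's actual schema. The tool handler is a stub.

```typescript
// Hypothetical shape of an "agent GPT": engine + prompt + tools + memory.
type ToolHandler = (args: Record<string, unknown>) => unknown;

interface ChatMessage {
  role: "user" | "assistant" | "tool";
  content: string;
}

interface AgentDefinition {
  model: string;                   // the LLM "engine" (identifier is illustrative)
  systemPrompt: string;            // job, policy, and tone
  tools: Map<string, ToolHandler>; // function tools the agent may call
  memory: {
    history: ChatMessage[];        // conversation so far
    facts: Map<string, string>;    // durable facts about the user
  };
}

function createAgent(model: string, systemPrompt: string): AgentDefinition {
  return {
    model,
    systemPrompt,
    tools: new Map(),
    memory: { history: [], facts: new Map() },
  };
}

// Usage: a toy front-desk agent with one stubbed tool.
const agent = createAgent(
  "example-model",
  "You answer the phone for a dental practice. Be brief and polite.",
);
agent.tools.set("find_slots", (args) => [`${String(args.date)} 14:00`, `${String(args.date)} 15:30`]);
agent.memory.facts.set("preferred_name", "Sam");
agent.memory.history.push({ role: "user", content: "Can I move my cleaning?" });

console.log(agent.tools.size, agent.memory.history.length); // 1 1
```

Keeping the pieces separate means the runtime code stays the same across agents; only the prompt, the tool set, and the memory contents change.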

I built CallSphere on this exact pattern. Our healthcare agent has a 4,200-token system prompt, 9 function tools, and a per-caller memory record. It answers calls in 600ms and resolves 71% of them without human escalation. That is what agent GPT looks like at production scale.

What is the difference between AgentGPT the project and a production AI agent?

AgentGPT (the 2023 open-source project at agentgpt.reworkd.ai) was a milestone. It showed that you could chain LLM calls into autonomous goal-pursuit. It is still a useful sandbox. But it was never a production runtime — no audit trail, no rate limiting, no fault tolerance, no multi-tenant isolation, no HIPAA controls.

A 2026 production agent — like one of CallSphere's 6 — has:

- Audited tool calls written to a tool_calls table

- Per-call latency budgets with automatic degradation paths

- Multi-tenant isolation at the session level

- Verticalized prompts tuned against 500+ real fixtures

- Failover to a secondary model when the primary returns 5xx errors

- A human-in-the-loop escalation interface

That is not a weekend project. That is what you pay a platform for.
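Two of those items, failing over to a secondary model and recording each attempt, can be sketched in a few lines. This is a minimal synchronous sketch: `ModelClient`, `ModelError`, and `completeWithFailover` are hypothetical stand-ins rather than a real SDK, and a production runtime would also need timeouts and async I/O.

```typescript
// Hypothetical error type carrying an HTTP-style status code.
class ModelError extends Error {
  status: number;
  constructor(status: number, message: string) {
    super(message);
    this.status = status;
    this.name = "ModelError";
  }
}

type ModelClient = { name: string; complete: (prompt: string) => string };
interface Attempt { model: string; ok: boolean; status?: number }

// True only for server-side (5xx) failures.
function isServerError(err: unknown): boolean {
  if (typeof err !== "object" || err === null || !("status" in err)) return false;
  const status = (err as { status: unknown }).status;
  return typeof status === "number" && status >= 500 && status <= 599;
}

function completeWithFailover(prompt: string, primary: ModelClient, secondary: ModelClient, log: Attempt[]): string {
  try {
    const out = primary.complete(prompt);
    log.push({ model: primary.name, ok: true });
    return out;
  } catch (err) {
    // Only 5xx errors trigger failover; client errors (4xx) propagate unchanged.
    if (!isServerError(err)) throw err;
    log.push({ model: primary.name, ok: false, status: (err as ModelError).status });
    const out = secondary.complete(prompt);
    log.push({ model: secondary.name, ok: true });
    return out;
  }
}

// Usage: the primary is "down", so the secondary answers.
const log: Attempt[] = [];
const primary: ModelClient = { name: "primary", complete: () => { throw new ModelError(503, "unavailable"); } };
const secondary: ModelClient = { name: "secondary", complete: (p) => `echo: ${p}` };
const reply = completeWithFailover("hello", primary, secondary, log);
console.log(reply); // echo: hello
```

Failing over only on 5xx is deliberate in this sketch: a 4xx usually means the request itself is wrong, and retrying it on another model would not help.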

How does function calling power agent GPT in practice?

Function calling is the mechanism that turns an LLM from a chatbot into an agent. Instead of replying in natural language, the model emits a JSON object naming a function and its arguments. Your runtime executes that function, returns the result, and the model continues the conversation.
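The loop itself is small. The sketch below uses a scripted `fakeModel` in place of a real LLM call so it runs offline. The message shapes and the `runTurn` helper are assumptions for illustration, not any provider's exact API. Tool arguments are modeled as a JSON string that the runtime parses, which is how many function-calling APIs deliver them.

```typescript
// Offline sketch of a function-calling loop. fakeModel stands in for a real LLM.
type ToolCall = { name: string; arguments: string }; // arguments arrive as JSON text
type ModelTurn = { type: "text"; text: string } | { type: "tool_call"; call: ToolCall };
type Message = { role: "user" | "assistant" | "tool"; content: string };

const tools: Record<string, (args: Record<string, unknown>) => unknown> = {
  find_slots: (args) => ({ date: args.date, slots: ["14:00", "15:30"] }),
};

// Scripted model: requests a tool after a user message, answers in text after a tool result.
function fakeModel(messages: Message[]): ModelTurn {
  const last = messages[messages.length - 1];
  if (!last || last.role === "user") {
    return { type: "tool_call", call: { name: "find_slots", arguments: '{"date":"2026-05-14"}' } };
  }
  return { type: "text", text: `Here is what I found: ${last.content}` };
}

function runTurn(messages: Message[], maxSteps = 5): string {
  for (let step = 0; step < maxSteps; step++) {
    const turn = fakeModel(messages);
    if (turn.type === "text") {
      messages.push({ role: "assistant", content: turn.text });
      return turn.text;
    }
    // The model named a function: look it up, execute it, and feed the result back.
    const handler = tools[turn.call.name];
    if (!handler) throw new Error(`Unknown tool: ${turn.call.name}`);
    const args = JSON.parse(turn.call.arguments) as Record<string, unknown>;
    messages.push({ role: "tool", content: JSON.stringify(handler(args)) });
  }
  throw new Error("No final answer within maxSteps");
}

const history: Message[] = [{ role: "user", content: "Any openings Thursday?" }];
console.log(runTurn(history));
// Here is what I found: {"date":"2026-05-14","slots":["14:00","15:30"]}
```

The `maxSteps` cap is one simple guard against a model that keeps calling tools without ever producing an answer.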


CallSphere's 14 function tools span four categories:

- Scheduling: book_appointment, reschedule, cancel, find_slots

- CRM: lookup_contact, create_lead, update_lead_status, add_note

- Communication: send_sms, send_email, schedule_callback

- Escalation: transfer_to_human, flag_for_review, end_call

When a caller says "I need to reschedule Tuesday's appointment to Thursday afternoon," the agent calls find_slots with Thursday's date filter, calls reschedule with the new slot, then confirms verbally. Two tool calls plus a spoken confirmation, one minute of caller time, zero human involvement.
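The reschedule request above maps onto two function calls. A minimal sketch against an in-memory calendar follows. The tool names come from the list above, but their signatures, the sample dates (Tuesday 12 May and Thursday 14 May 2026), and the rule that "afternoon" means 12:00 or later are assumptions for illustration.

```typescript
// Sketch of the reschedule flow against an in-memory calendar (all data hypothetical).
interface Appointment { id: string; start: string } // "YYYY-MM-DDTHH:MM"

const openSlots: string[] = ["2026-05-14T09:00", "2026-05-14T13:30", "2026-05-14T16:00"]; // Thursday
const appointments: Appointment[] = [{ id: "apt-1", start: "2026-05-12T10:00" }];        // Tuesday
const toolLog: string[] = [];

function find_slots(date: string, afternoonOnly: boolean): string[] {
  toolLog.push("find_slots");
  // s.slice(11) is the "HH:MM" part; string comparison works for zero-padded times.
  return openSlots.filter((s) => s.startsWith(date) && (!afternoonOnly || s.slice(11) >= "12:00"));
}

function reschedule(appointmentId: string, newStart: string): Appointment {
  toolLog.push("reschedule");
  const apt = appointments.find((a) => a.id === appointmentId);
  if (!apt) throw new Error(`No appointment ${appointmentId}`);
  const idx = openSlots.indexOf(newStart);
  if (idx === -1) throw new Error(`Slot ${newStart} is not open`);
  openSlots.splice(idx, 1);  // claim the new slot
  openSlots.push(apt.start); // release the old one
  apt.start = newStart;
  return apt;
}

// "I need to reschedule Tuesday's appointment to Thursday afternoon."
const thursdayAfternoon = find_slots("2026-05-14", true);
const chosen = thursdayAfternoon[0]; // a real agent would offer the options; here we take the first
if (!chosen) throw new Error("No Thursday afternoon slots");
const updated = reschedule("apt-1", chosen);
console.log(updated.start, toolLog.length); // 2026-05-14T13:30 2
```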

How CallSphere does this in production

Architecturally, every CallSphere agent is the same shape: a TypeScript runtime holding a GPT-Realtime-2 session, a prompt loaded from agent_prompts, a tool registry filtered by the agent's vertical_scope, and a memory layer that reads/writes to conversations and tool_calls.

The 20+ Postgres tables form a clean separation of concerns: agents (the 6 verticals), tools (the 14 functions), agent_tools (which agent can call which tool), call_sessions (every active call), messages (every utterance), tool_calls (every function invocation with input/output/latency), escalations (every human handoff). Observability is built in because the schema demands it.
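To make the agent_tools and tool_calls split concrete, here is an in-memory sketch of an audited tool invocation. The allowlist plays the role of agent_tools, and every attempt, allowed or refused, lands in a tool_calls log with input, output, and latency. Field names and the enforcement logic are assumptions; a real deployment would write these rows to Postgres rather than to an array.

```typescript
// In-memory stand-ins for the agent_tools and tool_calls tables (hypothetical columns).
interface ToolCallRow {
  sessionId: string;
  agentId: string;
  tool: string;
  input: string;   // JSON-encoded arguments
  output: string;  // JSON-encoded result or error
  latencyMs: number;
  allowed: boolean;
}

const agentTools: Record<string, Set<string>> = {
  healthcare: new Set(["find_slots", "book_appointment", "reschedule", "transfer_to_human"]),
  sales: new Set(["lookup_contact", "create_lead", "update_lead_status"]),
};

const toolCalls: ToolCallRow[] = [];

function invokeAudited(
  sessionId: string,
  agentId: string,
  tool: string,
  args: Record<string, unknown>,
  impl: (args: Record<string, unknown>) => unknown,
): unknown {
  const started = Date.now();
  const allowed = agentTools[agentId]?.has(tool) ?? false;
  let output: unknown;
  try {
    if (!allowed) throw new Error(`${agentId} may not call ${tool}`);
    output = impl(args);
    return output;
  } catch (err) {
    output = { error: err instanceof Error ? err.message : String(err) };
    throw err;
  } finally {
    // Every attempt, including refused and failed ones, is written to the audit log.
    toolCalls.push({
      sessionId, agentId, tool, allowed,
      input: JSON.stringify(args),
      output: JSON.stringify(output ?? null),
      latencyMs: Date.now() - started,
    });
  }
}

// Usage: one permitted call, one refused call.
invokeAudited("call-1", "healthcare", "find_slots", { date: "2026-05-14" }, () => ["13:30", "16:00"]);
try {
  invokeAudited("call-1", "sales", "book_appointment", { slot: "13:30" }, () => "booked");
} catch (err) {
  console.log((err as Error).message); // sales may not call book_appointment
}
console.log(toolCalls.length); // 2
```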

When buyers ask "is this just a wrapper around ChatGPT?" — no. ChatGPT is a consumer chat product. CallSphere is a production agent runtime with verticalized prompts, audited tool calls, HIPAA controls, and per-vertical voice models. Same underlying model family; very different operational surface.

A real example walk-through

A solo dental practice in Sacramento went live on CallSphere's healthcare agent on May 5, 2026. The owner was tired of missing after-hours calls and paying $890/mo to an answering service that read "the office is closed" and took messages.

We ported the number. We loaded the practice's FAQ — hours, insurance accepted, location, common procedures, cancellation policy — into the agent prompt. We wired the calendar via the practice's existing Dentrix integration. Go-live: 4 business days.

In the first 14 days, the agent took 287 after-hours calls. It booked 41 first-time appointments. It rescheduled 22 existing ones. It flagged 11 calls as "needs human review" — mostly insurance edge cases. Zero PHI leaks. Zero unhandled errors.

The owner's prior $890/mo answering service is canceled. CallSphere Starter at $149/mo replaced it. Net savings: $741/mo. Plus 41 new patient appointments that would otherwise have hit voicemail.
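The savings math, spelled out with the figures from this example. The yearly number is a straight extrapolation (12 times the monthly difference), not a figure the practice reported, and it ignores the value of the 41 new appointments.

```typescript
// Figures from the Sacramento example above; the yearly figure is an extrapolation.
const answeringServiceMonthlyUsd = 890; // prior answering service
const starterMonthlyUsd = 149;          // CallSphere Starter plan

const monthlySavingsUsd = answeringServiceMonthlyUsd - starterMonthlyUsd;
const yearlySavingsUsd = monthlySavingsUsd * 12;

console.log(monthlySavingsUsd, yearlySavingsUsd); // 741 8892
```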


Pricing and how to try it

CallSphere's agent platform is $149/mo Starter (1 agent, 2,000 interactions), $499/mo Growth (3 agents, 10,000 interactions, most popular), and $1,499/mo Scale (unlimited agents, 50,000 interactions). Annual saves ~15%. 14-day free trial, no credit card.
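For sizing, the tier list above reduces to a simple lookup: the cheapest plan whose agent and interaction limits cover your expected load. A minimal sketch using the listed prices and limits. The article does not say how overages are billed, so this sketch returns `undefined` for anything above the Scale limit, and the ~15% annual discount is left out because it is approximate.

```typescript
interface Tier { name: string; monthlyUsd: number; maxAgents: number; interactions: number }

// Prices and limits as listed above, ordered cheapest first.
const tiers: Tier[] = [
  { name: "Starter", monthlyUsd: 149, maxAgents: 1, interactions: 2000 },
  { name: "Growth", monthlyUsd: 499, maxAgents: 3, interactions: 10000 },
  { name: "Scale", monthlyUsd: 1499, maxAgents: Infinity, interactions: 50000 }, // unlimited agents
];

function pickTier(agents: number, monthlyInteractions: number): Tier | undefined {
  // Because tiers are sorted by price, the first match is the cheapest one that fits.
  return tiers.find((t) => agents <= t.maxAgents && monthlyInteractions <= t.interactions);
}

function costPerInteractionUsd(t: Tier): number {
  return t.monthlyUsd / t.interactions;
}

const fit = pickTier(2, 4000);
console.log(fit?.name); // Growth
if (fit) console.log(costPerInteractionUsd(fit).toFixed(4)); // 0.0499
```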

If you want to spin up an agent against your real workflow today, sign up and follow the onboarding wizard — it takes about 12 minutes to a working staging agent.


Frequently asked questions

What is agent GPT and how does it work? Agent GPT is a generic term for a GPT-based AI agent that can take actions through tool calls, not just generate text. It works by combining a large language model (the brain) with a registry of functions (the hands) and a memory store (the memory). When a user makes a request, the agent's model decides which function to call, your runtime executes it, and the model continues with the result. Production agent GPTs add audit logs, error handling, and multi-tenant isolation on top.

Is agent GPT the same as AutoGPT and BabyAGI? They share DNA. AutoGPT and BabyAGI (both 2023) popularized autonomous LLM agents that could plan multi-step goals. AgentGPT was a web UI version of the same idea. In 2026, the architecture has matured into production-grade managed platforms — like CallSphere — that prioritize reliability, audit, and verticalization over open-ended autonomy. The underlying pattern (prompt + tools + memory) is the same; the production discipline is much higher.

Can I build my own agent GPT instead of using a platform? Yes, and the building blocks are well-documented. You need a model API with tool calling (for voice, the OpenAI Realtime API; Anthropic's Model Context Protocol, MCP, is an open standard for connecting tools to models), a TypeScript or Python runtime, a Postgres database for state, and a tool registry. Realistic build time for one production-grade agent: 8–14 engineer-weeks, including observability and error handling. CallSphere replaces that with a 3–5 day go-live.

What models power agent GPT in 2026? Most production agents run on GPT-Realtime-2 (for voice), GPT-5 (for complex reasoning chat), or Claude 3.5 Sonnet / Claude Sonnet 4 (for long-context analysis). CallSphere defaults to GPT-Realtime-2 with its 128K context window for voice, and we benchmark against GPT-5 on text-heavy back-office agents. Model choice matters less than prompt quality and tool design.

How safe is agent GPT for sensitive data? Safe enough for HIPAA, GDPR, and SOC 2 if the platform is built for it. CallSphere signs BAAs, stores data in encrypted Postgres with row-level access, supports EU-region inference on request, and never trains on customer data. The risk is not the LLM — it is the integration surface. Lock down your tool registry, audit every call, and review the platform's compliance posture before signing.

Can agent GPT make decisions without human approval? Yes, within bounds defined by its prompt and tool permissions. CallSphere's healthcare agent books appointments autonomously but escalates anything insurance-related to humans. Our sales agent qualifies leads but never closes deals over $X without escalation. The agent's "autonomy" is just a configuration on which tools it can call without confirmation.
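One way to express that configuration is a per-tool policy that the runtime consults before executing anything. A minimal sketch: the `run`/`confirm`/`escalate` modes, the hypothetical `close_deal` tool, and the threshold parameter are assumptions for illustration. The example threshold is arbitrary and is not the limit the article leaves as $X.

```typescript
// Hypothetical per-tool autonomy policy consulted before any tool executes.
type Decision = "run" | "confirm" | "escalate";

interface ToolPolicy {
  mode: Decision;                                          // default handling for this tool
  escalateIf?: (args: Record<string, unknown>) => boolean; // optional override to a human
}

function decide(policies: Record<string, ToolPolicy>, tool: string, args: Record<string, unknown>): Decision {
  const policy = policies[tool];
  if (!policy) return "escalate"; // tools without a policy never run autonomously
  if (policy.escalateIf?.(args)) return "escalate";
  return policy.mode;
}

// Sales-style policy; close_deal is a hypothetical tool and the threshold is operator-supplied.
function makeSalesPolicies(dealThresholdUsd: number): Record<string, ToolPolicy> {
  return {
    create_lead: { mode: "run" },
    update_lead_status: { mode: "run" },
    close_deal: { mode: "confirm", escalateIf: (a) => Number(a.amountUsd) > dealThresholdUsd },
  };
}

const sales = makeSalesPolicies(5000); // arbitrary example threshold
console.log(decide(sales, "create_lead", {}));                  // run
console.log(decide(sales, "close_deal", { amountUsd: 1200 }));  // confirm
console.log(decide(sales, "close_deal", { amountUsd: 25000 })); // escalate
```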

What is the cheapest way to try agent GPT? CallSphere's 14-day free trial costs nothing and gives you a working production agent in your account in under an hour. AgentGPT.reworkd.ai is free for experimentation but is not production-grade. The OpenAI Playground lets you prototype function calling for free for the first $5–$10 of usage.

How do agent GPTs handle long conversations? The 2026 generation handles long conversations well because GPT-Realtime-2 has a 128K context window — about 45–50 minutes of dense voice or a few hundred chat messages. Older agents on 32K windows truncated context silently. If you are evaluating a platform, ask "what is the model's context window?" and "what happens when it fills?" — the answers tell you whether you are buying 2026 tech or 2024 tech.
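For the "what happens when it fills?" question, one common answer is to keep the system prompt, drop the oldest turns, and report what was dropped instead of truncating silently. A minimal sketch: the 4-characters-per-token estimate is a rough heuristic rather than a real tokenizer, and some production systems summarize old turns instead of discarding them.

```typescript
interface Msg { role: "system" | "user" | "assistant"; content: string }

// Rough heuristic, not a tokenizer: about 4 characters per token for English text.
const estimateTokens = (text: string): number => Math.ceil(text.length / 4);

function fitToWindow(messages: Msg[], budgetTokens: number): { kept: Msg[]; dropped: number } {
  const system = messages.filter((m) => m.role === "system");
  let used = system.reduce((sum, m) => sum + estimateTokens(m.content), 0);
  const recent: Msg[] = [];
  // Walk from newest to oldest so the most recent turns survive.
  for (let i = messages.length - 1; i >= 0; i--) {
    const m = messages[i];
    if (!m || m.role === "system") continue;
    const cost = estimateTokens(m.content);
    if (used + cost > budgetTokens) break;
    used += cost;
    recent.unshift(m);
  }
  const nonSystem = messages.length - system.length;
  return { kept: [...system, ...recent], dropped: nonSystem - recent.length };
}

const convo: Msg[] = [
  { role: "system", content: "s".repeat(40) }, // ~10 tokens
  { role: "user", content: "a".repeat(40) },   // ~10 tokens
  { role: "assistant", content: "b".repeat(40) },
  { role: "user", content: "c".repeat(40) },
];
const { kept, dropped } = fitToWindow(convo, 30);
console.log(kept.length, dropped); // 3 1
```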

